The "Red Zone" Commit: Why Your AI Ethics PDF Will Fail in Production

Introduction: The Calendar Doesn't Lie

We are only a few months away from August 2, 2026. To a casual observer, it is just another date. But for Engineering Directors, CTOs, and Architects in healthcare, legal tech, and retail, it marks a hard stop.

On that date, the full runtime obligations for High-Risk AI Systems (Annex III) under the EU AI Act become applicable. The "grace period," when we could treat governance as a documentation exercise, is effectively over. We have entered the "Red Zone."

At Data Mills, we are seeing a dangerous trend: organizations are treating this as a policy problem. But regulators don’t grep through policies. They audit system states.

Part 1: The Regulatory Stack – It’s Not Just a Tag

Based on the EU AI Act Enterprise Guide, compliance isn't a boolean flag you flip in your config file. It’s a categorization problem that affects your entire inference pipeline.

1. The "Prohibited" Trap (Article 5): Hard Blocks at Ingress

Many retail and workplace analytics companies think they are safe. But if your system performs "Biometric Categorization" (using biometric data to infer race or political opinions) or emotion recognition in the workplace, you are hitting a Prohibited Practice.

- The Engineering Reality: This isn't a model training issue; it's an ingress issue. If your API gateway accepts a payload that allows emotion inference, your infrastructure is non-compliant before the model even runs a forward pass.

- The Data Mills Solution: We build Input Guardrails at the API level. We sanitize the payload and block prohibited feature vectors before they reach the inference engine.
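A minimal sketch of such an ingress guardrail, assuming JSON payloads arrive as Python dicts. The denylist in `PROHIBITED_FIELDS` and the names `check_ingress` and `GuardrailResult` are hypothetical illustrations; which fields are actually prohibited depends on your own legal classification. The guard walks the whole payload, including nested objects and arrays, and rejects the request before anything reaches the model:

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical denylist: field names that would let the model infer attributes
# covered by Article 5. A real list comes from your legal classification.
PROHIBITED_FIELDS = frozenset({
    "face_embedding",
    "voice_sample",
    "emotion_label",
    "inferred_ethnicity",
    "political_affiliation",
})


@dataclass
class GuardrailResult:
    allowed: bool
    violations: list[str] = field(default_factory=list)


def _scan(node: Any, path: str, hits: list[str]) -> None:
    """Recursively walk dicts and lists, recording the path of every prohibited key."""
    if isinstance(node, dict):
        for key, value in node.items():
            key_path = f"{path}.{key}" if path else str(key)
            if str(key).lower() in PROHIBITED_FIELDS:
                hits.append(key_path)
            _scan(value, key_path, hits)
    elif isinstance(node, list):
        for i, item in enumerate(node):
            _scan(item, f"{path}[{i}]", hits)


def check_ingress(payload: dict[str, Any]) -> GuardrailResult:
    """Decide at the gateway, before any inference, whether the payload may pass."""
    hits: list[str] = []
    _scan(payload, "", hits)
    return GuardrailResult(allowed=not hits, violations=hits)
```

For example, `check_ingress({"applicant": {"income": 52000, "emotion_label": "anxious"}})` returns `GuardrailResult(allowed=False, violations=['applicant.emotion_label'])`. A field-name denylist is only a first line of defense: it cannot detect prohibited inferences drawn from innocuous-looking fields, so it complements, rather than replaces, a review of what the model is designed to infer.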

2. The "High-Risk" Core (Annex III): The Monolith

This is where our clients in Healthcare (triage algos), Legal (contract review), and Retail (credit scoring) live.

- The Requirement: Strict "Conformity Assessments."

- The Translation: You need comprehensive observability, not just on system health (latency/uptime), but on decision logic.
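To make "observability on decision logic" concrete, here is a sketch that tracks what the model decided, per model version, alongside how often it was unsure. The class name `DecisionMonitor`, the outcome labels, and the 0.88 confidence threshold are illustrative assumptions, not a prescribed metric set:

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field


@dataclass
class DecisionStats:
    total: int = 0
    low_confidence: int = 0
    outcomes: Counter = field(default_factory=Counter)


class DecisionMonitor:
    """Tracks what the model decided per model version, not just whether it responded."""

    def __init__(self, low_confidence_threshold: float = 0.88) -> None:
        self.threshold = low_confidence_threshold  # illustrative value, not a standard
        self.stats: dict[str, DecisionStats] = defaultdict(DecisionStats)

    def record(self, model_version: str, outcome: str, confidence: float) -> None:
        s = self.stats[model_version]
        s.total += 1
        s.outcomes[outcome] += 1
        if confidence < self.threshold:
            s.low_confidence += 1

    def outcome_rate(self, model_version: str, outcome: str) -> float:
        # .get avoids creating empty entries for versions never seen
        s = self.stats.get(model_version)
        return s.outcomes[outcome] / s.total if s and s.total else 0.0

    def low_confidence_rate(self, model_version: str) -> float:
        s = self.stats.get(model_version)
        return s.low_confidence / s.total if s and s.total else 0.0
```

Recording two decisions for `"v3"` (`"deny"` at 0.95 and `"approve"` at 0.80) yields a deny rate of 0.5 and a low-confidence rate of 0.5. A shift in those rates after a model update is the kind of signal that latency and uptime dashboards never surface.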

3. General Purpose AI (GPAI) Dependency Hell

If you are wrapping a Foundation Model (like GPT-4 or Claude) via API, you are introducing a black-box dependency. You don't control the model, but you are responsible for the system built on it; you own the risk of its hallucinatory behavior downstream.
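One way to contain a black-box dependency is to pin the exact model identifier and refuse to act on any output that violates an explicit contract. The sketch below assumes the provider call is injected as a plain function `call_model(model_id, prompt) -> str`; that signature, the class `PinnedGPAIClient`, and the JSON contract (`clause_type`, `risk_level`) are hypothetical and do not correspond to any vendor SDK:

```python
import json
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical output contract for a clause-classification prompt.
REQUIRED_KEYS = {"clause_type", "risk_level"}
ALLOWED_RISK = ("low", "medium", "high")


@dataclass(frozen=True)
class GPAIResult:
    model_id: str           # the pinned model that produced this output
    raw_text: str           # kept verbatim for the audit trail
    parsed: Optional[dict]  # None if the output was not a JSON object
    valid: bool             # True only if the output met the contract


class PinnedGPAIClient:
    """Wraps a third-party model behind a pinned version and an output contract."""

    def __init__(self, model_id: str, call_model: Callable[[str, str], str]) -> None:
        # Pin an explicit snapshot identifier; never float on an alias like "latest".
        self.model_id = model_id
        self._call_model = call_model  # hypothetical transport: (model_id, prompt) -> text

    def complete(self, prompt: str) -> GPAIResult:
        raw = self._call_model(self.model_id, prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            return GPAIResult(self.model_id, raw, None, False)
        if not isinstance(parsed, dict):
            return GPAIResult(self.model_id, raw, None, False)
        valid = REQUIRED_KEYS.issubset(parsed) and parsed["risk_level"] in ALLOWED_RISK
        return GPAIResult(self.model_id, raw, parsed, valid)
```

Recording `model_id` with every result also feeds the audit trail described in Part 4: when the provider ships a new snapshot, you can tell which decisions were made by which model.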

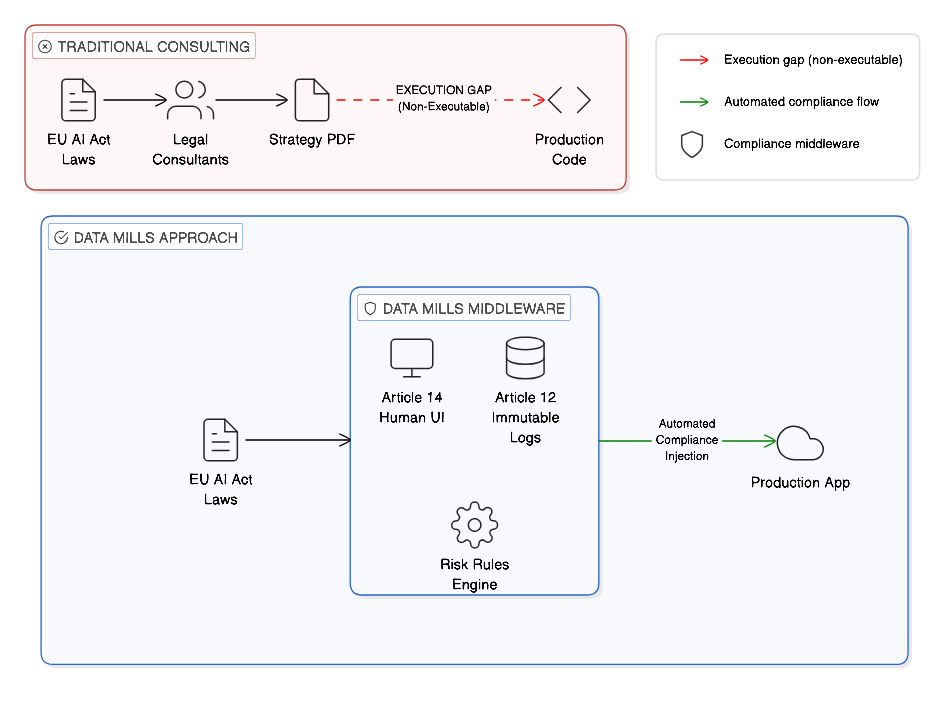

Part 2: The "Consultant Gap" (Or: Why PDFs Don't Compile)

The single biggest failure point in 2026 is the disconnect between the General Counsel and the DevOps team.

In the traditional corporate structure, Legal hires consultants. The consultants map out requirements like Article 14 (Human Oversight) and hand a 100-page PDF to the CTO.

Then, that PDF hits the backlog. And it dies there. This is the "Consultant Gap."

When this gap exists, you get "Shadow Compliance." The policy says one thing; the production code does another.

Data Mills functions as the middleware. We provide the Builder Bridge: we translate "Regulatory Risk" into "API Endpoints," "Immutable Loggers," and "State Management" code that actually compiles.

Part 3: Article 14 is a State Management Problem

Let’s look at the requirement that breaks 90% of the architectures we review: Human Oversight (Article 14).

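The Law says: "High-risk AI systems shall be designed and developed in such a way, including with appropriate human-machine interface tools, that they can be effectively overseen by natural persons during the period in which they are in use."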

You cannot "oversee" a system if the inference pipeline doesn't expose its internal state (confidence intervals, attention weights) in real time. If you don't have a "Stop Button" (Kill Switch) hard-coded into the loop, you are running an unmanaged process.

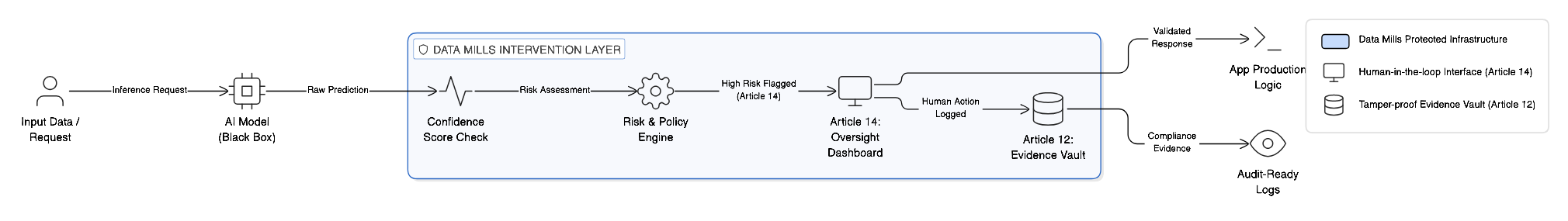

The Data Mills Solution: The Intervention Layer

We deploy a distinct microservice layer that sits between your model and the final output. As shown in the diagram:

- Confidence Monitor: We tap into the model's output layer. If the probability score drops below a set threshold (e.g., 0.88), we trigger an event.

- The Human Override Node: This isn't an email alert. It's routing logic that redirects the request to a human review queue.

- The Kill Switch: A functional circuit breaker that lets an authorized operator halt automated outputs immediately.

- Synchronous Logging: Every override action is serialized and written to the audit log before the response is returned.
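The four components above can be sketched as a single in-process class, assuming predictions arrive with a scalar confidence score and that the audit sink is any file-like object with `write` and `flush`. `InterventionLayer`, `Prediction`, and the event names are hypothetical; in production each piece would more likely be its own service, as in the diagram:

```python
import json
import threading
import time
from dataclasses import dataclass
from queue import Queue
from typing import IO, Optional


@dataclass(frozen=True)
class Prediction:
    request_id: str
    label: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]


class InterventionLayer:
    """Sits between the model and the caller: monitor, reroute, halt, and log."""

    def __init__(self, audit_sink: IO[str], threshold: float = 0.88) -> None:
        self.threshold = threshold
        self.human_queue: "Queue[Prediction]" = Queue()  # the human override node
        self._audit = audit_sink
        self._lock = threading.Lock()
        self._halted = False

    def _log(self, event: str, **fields: object) -> None:
        # Synchronous logging: serialize, write, and flush before returning to the caller.
        record = {"ts": time.time(), "event": event, **fields}
        with self._lock:
            self._audit.write(json.dumps(record, sort_keys=True) + "\n")
            self._audit.flush()

    def halt(self, operator_id: str) -> None:
        """Kill switch: stop releasing automated outputs until reset."""
        with self._lock:
            self._halted = True
        self._log("kill_switch_engaged", operator=operator_id)

    def reset(self, operator_id: str) -> None:
        with self._lock:
            self._halted = False
        self._log("kill_switch_reset", operator=operator_id)

    def route(self, pred: Prediction) -> Optional[str]:
        """Return the label to release automatically, or None if a human must decide."""
        with self._lock:
            halted = self._halted
        if halted or pred.confidence < self.threshold:
            # Confidence monitor or kill switch tripped: divert to the human queue.
            self.human_queue.put(pred)
            self._log("routed_to_human", request_id=pred.request_id,
                      confidence=pred.confidence,
                      reason="halted" if halted else "low_confidence")
            return None
        self._log("auto_released", request_id=pred.request_id,
                  label=pred.label, confidence=pred.confidence)
        return pred.label

    def record_override(self, request_id: str, operator_id: str, decision: str) -> None:
        """Log a human reviewer's decision before it is returned to the caller."""
        self._log("human_override", request_id=request_id,
                  operator=operator_id, decision=decision)
```

In this sketch, engaging the kill switch does not drop traffic; it stops automated release and sends every request to the human queue until `reset` is called. Because `_log` flushes before `route` returns, no decision leaves the layer before its audit record has been handed to the sink; a real deployment would also need that sink to be durable.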

Part 4: Article 12 – Why console.log Won't Survive Court

Another massive failure point is Record Keeping (Article 12).

Most engineering teams rely on ephemeral logs: Datadog streams, CloudWatch, or plain console.log. These are designed for debugging. They are often unstructured, they are typically retained for days or weeks rather than years, and they are noisy.

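The EU AI Act requires Traceability: Article 12 mandates that high-risk AI systems technically allow for the automatic recording of events (logs) over the lifetime of the system.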

If a regulator asks why your AI denied a loan three years ago, showing them a grep of a deleted log file is not a defense.

Digital Forensics, Not Just Logging

The Data Mills infrastructure implements Immutable Audit Trails. We treat every inference event as a forensic artifact.

- Model Version Hash: We log the SHA-256 hash of the specific model weights used at that millisecond.

- The Input Snapshot: The raw tensor data or JSON payload the model "saw" (before normalization).

- The Logic Path: Which decision tree branch or vector cluster was activated?

- The Human Operator: Who was authorized in the loop?
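A sketch of one such forensic record, assuming the model weights live in a single file and inputs are JSON-serializable. `sha256_file`, `InferenceAuditRecord`, and `make_record` are hypothetical names, and the `logic_path` format (a list of branch or cluster identifiers) is an assumption that depends on your model type. The hash is meant to be computed once at model load and reused for every request, since re-hashing a multi-gigabyte checkpoint per inference would be wasteful:

```python
import copy
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Optional


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream the weights file in 1 MiB chunks so large checkpoints never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


@dataclass(frozen=True)
class InferenceAuditRecord:
    timestamp_utc: str
    model_sha256: str               # hash of the exact weights that served this request
    input_snapshot: dict[str, Any]  # raw payload, captured before normalization
    logic_path: list[str]           # hypothetical format: branch or cluster identifiers
    operator_id: Optional[str]      # authorized human in the loop, if any
    output: dict[str, Any]

    def to_json(self) -> str:
        # Canonical form (sorted keys, no extra whitespace) so identical records serialize identically.
        return json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))


def make_record(model_sha256: str, payload: dict[str, Any], logic_path: list[str],
                operator_id: Optional[str], output: dict[str, Any]) -> InferenceAuditRecord:
    return InferenceAuditRecord(
        timestamp_utc=datetime.now(timezone.utc).isoformat(timespec="milliseconds"),
        model_sha256=model_sha256,
        # Deep copy so later in-place normalization cannot alter the snapshot.
        input_snapshot=copy.deepcopy(payload),
        logic_path=list(logic_path),
        operator_id=operator_id,
        output=copy.deepcopy(output),
    )
```

The serialized output of `to_json()` is what would then be written to the WORM store, one record per inference event.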

We write this to WORM (Write Once, Read Many) storage. This creates a Log That Survives Court.
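WORM storage prevents rewriting at the storage layer (for example, Amazon S3 Object Lock in compliance mode). As a complementary, application-level check, each record can also commit to the hash of the one before it, so a later edit to any entry, or the deletion of an entry in the middle, breaks the chain. This is a minimal sketch of that hash-chaining technique; `HashChainedLog` is a hypothetical name and the in-memory list stands in for the WORM store:

```python
import hashlib
import json
from typing import Any

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _canonical(record: dict[str, Any]) -> str:
    return json.dumps(record, sort_keys=True, separators=(",", ":"))


class HashChainedLog:
    """Append-only log in which every entry commits to the hash of its predecessor."""

    def __init__(self) -> None:
        self._entries: list[dict[str, Any]] = []

    def append(self, record: dict[str, Any]) -> str:
        prev_hash = self._entries[-1]["entry_hash"] if self._entries else GENESIS_HASH
        body = _canonical(record)
        entry_hash = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
        # Store a detached copy so later mutation of the caller's dict can't alter the log.
        self._entries.append({"prev_hash": prev_hash, "record": json.loads(body),
                              "entry_hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every link; an edited entry or a removed middle entry fails the check."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = _canonical(entry["record"])
            expected = hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()
            if entry["prev_hash"] != prev_hash or entry["entry_hash"] != expected:
                return False
            prev_hash = entry["entry_hash"]
        return True
```

The chain detects tampering; it does not prevent it. Prevention remains the job of the WORM layer, and periodically publishing the latest `entry_hash` somewhere outside your own control also makes truncation of the tail detectable.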

Part 5: The "Build vs. Buy" Trap (NIH Syndrome)

We are six months out. The instinct for every Senior Engineer is: "I can build this. It’s just a logging wrapper and a frontend switch."

I know you can. But should you?

- Do you want your Senior ML Engineers building "Compliance ETL Pipelines"?

- Do you want your Frontend Architects designing "Regulatory Modals"?

- Or do you want them optimizing the core model and reducing inference costs?

Data Mills exists to offload "Compliance Debt." We are the plumbers for high-stakes industries: Healthcare, Legal, and Retail. We maintain the verified infrastructure so your team can focus on the product logic.

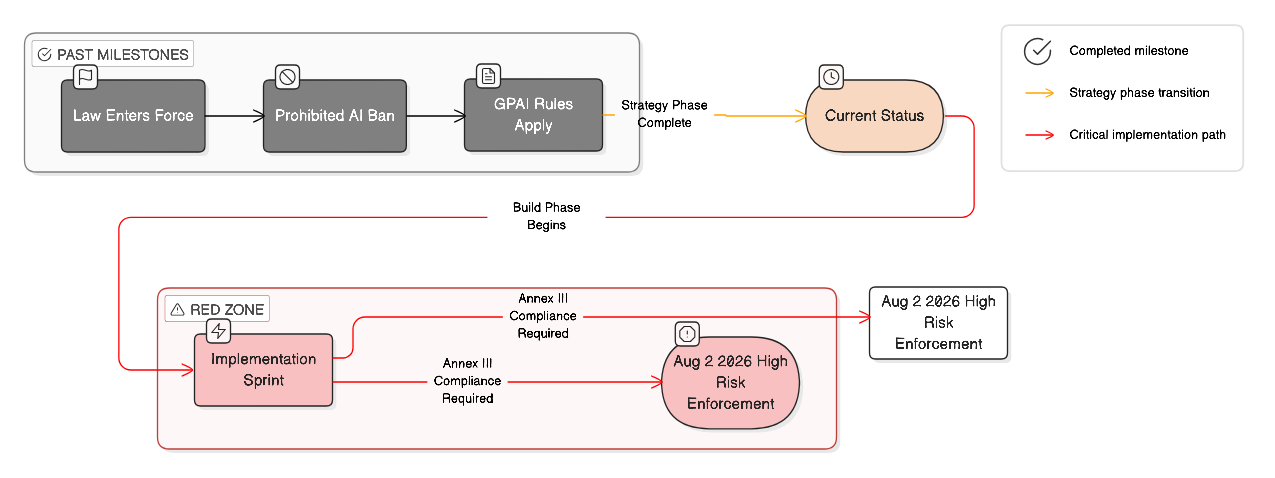

Conclusion: The "Red Zone"

As our timeline indicates, the window for "Strategy" has closed. We are now in the Implementation Sprint.

- August 2024: The Act entered into force.

- Today (Feb 2026): We are in the final sprint.

- August 2, 2026: The Deadline.

If your AI makes consequential decisions, you are sitting on unmanaged risk. The regulations are complex, but the architecture doesn't have to be. You don't need another roadmap. You need code that passes the test suite.

At Data Mills, we bridge the gap. We turn the liability of High-Risk AI into the asset of Operational Resilience.