Operationalizing Legal AI Compliance Under the EU AI Act

TL;DR: A legal-technology platform using AI for document automation and case triage was classified as a high-risk system under the EU AI Act because it is used in the administration of justice. Its original design lacked mandatory compliance controls: no formal risk management, no robust data governance or bias mitigation, no audit logging, minimal explainability, and no human-in-the-loop oversight. Datamills redesigned the architecture and processes to close these gaps, adding a risk-management framework (Article 9), data-quality checks (Article 10), full technical documentation (Article 11 and Annex IV), runtime audit logging (Article 12), human override and “stop” controls (Article 14), and accuracy and robustness safeguards (Article 15). The result is a shift from reactive compliance to inspection-ready AI infrastructure aligned with the EU AI Act.

Problem

The organization uses AI/ML to automate legal document processing and case triage. Under the EU AI Act, AI systems used in the administration of justice are listed as high-risk (Annex III). High-risk classification triggers strict obligations covering risk management, data quality, logging, documentation, transparency, human oversight, and robustness. The original system fell short of many of these requirements:

· No Risk Management (Art.9): The system lacked a systematic risk-analysis process. There was no hazard log or plan to identify legal liability or bias risks, and no mitigation measures, contrary to Article 9’s demand for a risk management system.

· Poor Data Governance (Art.10): Training and validation data were not vetted for fairness or completeness. Article 10 requires that datasets be relevant, sufficiently representative and, to the best extent possible, free of errors and complete for the intended purpose. The company's pipeline used unbalanced data from past cases without systematic bias checks or labeling audits, risking discriminatory outputs.

· Lack of Documentation (Art.11 & Annex IV): There was no comprehensive technical dossier. Article 11 mandates full documentation (Annex IV content) covering system purpose, design, data, test results, risk mitigations, etc.

· No Audit Logging (Art.12): The system did not record its actions. Article 12 requires high-risk AI systems to log events automatically so that their operation is traceable. Without logs (inputs, outputs, decision paths, user IDs), individual decisions could not be reconstructed or audited.

· Missing Human Oversight (Art.14): The AI decisions were fully automated. Article 14 requires that humans be able to monitor, override, or stop the AI’s decisions. The system had no “stop button” or human review path, violating this requirement.

· Insufficient Accuracy & Security (Art.15): There were no mechanisms to guarantee consistent accuracy, error resilience, or attack resistance. The system had no backup fail-safes or adversarial-defense measures, contrary to Article 15’s robustness and cybersecurity rules.

In short, the “pre-compliance” architecture was a simple AI inference pipeline with minimal controls.

Approach

Datamills audited the system against the EU AI Act’s high-risk provisions and mapped each regulatory obligation to a specific architectural gap. Instead of treating compliance as a documentation exercise, the team redesigned the system so that compliance controls operate continuously at runtime.

Key interventions included:

- Risk Management Integration

Introduced a formal risk register and monitoring loop to track bias, safety, and data risks throughout the AI lifecycle, aligning with Article 9 requirements for ongoing risk management. - Data Governance Controls

Added validation layers to assess dataset quality, detect drift, and flag representational imbalances before model updates, supporting Article 10 obligations on data quality and governance. - Audit Logging Infrastructure

Built a scalable logging pipeline capturing every model input, output, and decision event to ensure traceability and record-keeping as required under Article 12. - Automated Documentation

Implemented tooling to generate technical documentation directly from system architecture, datasets, and model metadata, ensuring alignment with Article 11 and Annex IV documentation requirements. - Human Oversight Controls

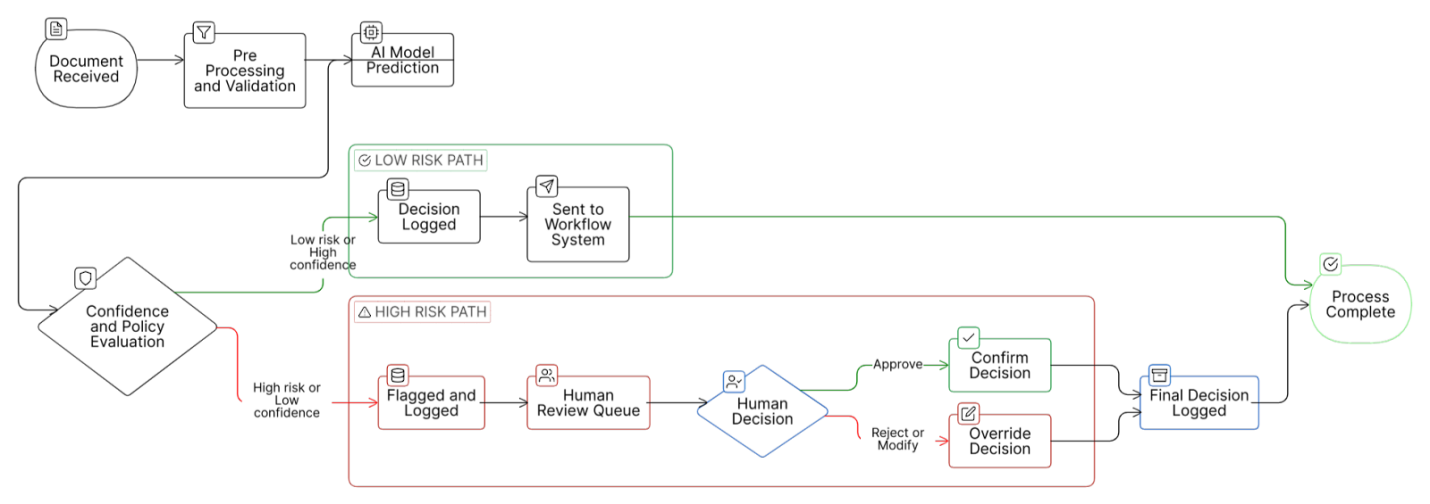

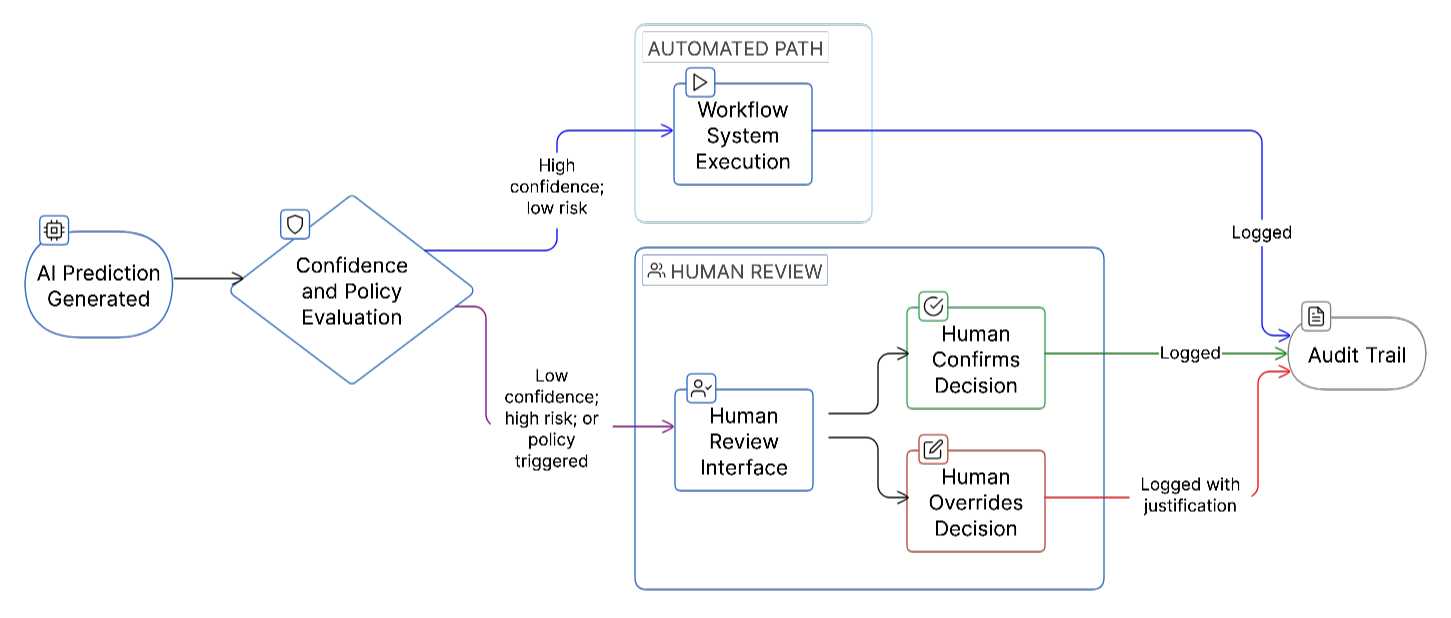

Added confidence thresholds and escalation logic so sensitive or uncertain predictions trigger professional review, fulfilling the human oversight provisions of Article 14. - Robustness & Security Safeguards

Embedded runtime monitoring, fallback logic, and hardened API layers to improve resilience, accuracy, and cybersecurity in accordance with Article 15.

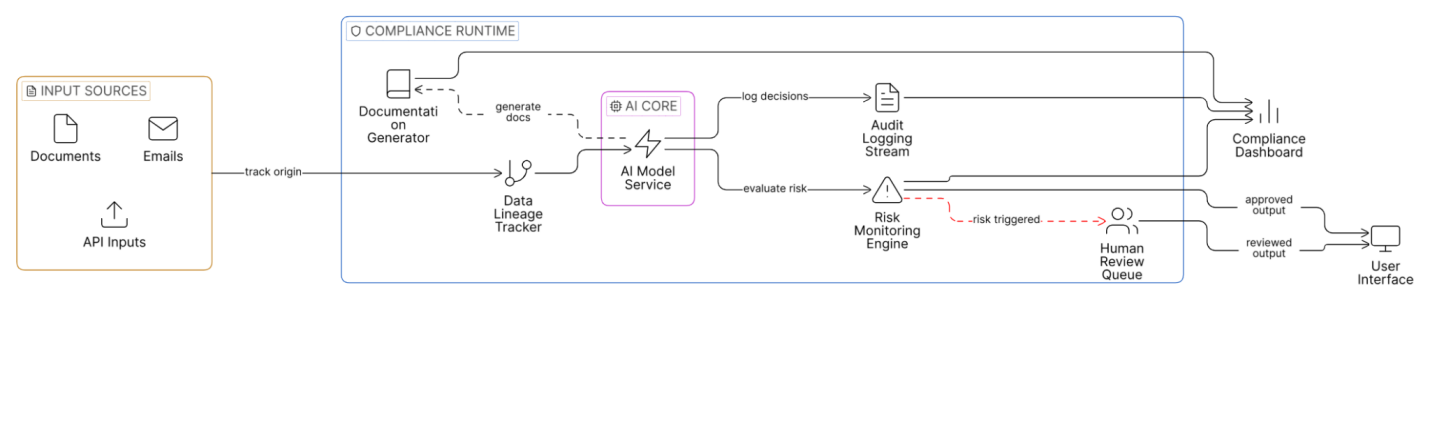

Together, these changes formed a compliance runtime layer around the AI system, transforming it into an architecture that continuously enforces regulatory requirements rather than reacting to audits.
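The data-governance validation layer mentioned above (checking dataset quality and flagging representational imbalances before a model update) might look roughly like the following. This is a minimal sketch under stated assumptions, not the platform's actual implementation: the record layout, the `case_type` grouping field, and the 10% minimum-share threshold are all hypothetical.

```python
from collections import Counter


def check_dataset(records, required_fields, group_field, min_share=0.10):
    """Return a list of findings; an empty list means nothing was flagged.

    records, required_fields, group_field and min_share are illustrative
    names and values, not taken from the platform described in this article.
    """
    if not records:
        return ["dataset is empty"]

    findings = []

    # Completeness: count records where a required field is missing or empty.
    for field in required_fields:
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        if missing:
            findings.append(f"{missing} record(s) missing '{field}'")

    # Representation: flag groups whose share of the data is below min_share.
    counts = Counter(
        r[group_field] for r in records if r.get(group_field) not in (None, "")
    )
    total = sum(counts.values())
    for group, n in sorted(counts.items()):
        share = n / total
        if share < min_share:
            findings.append(
                f"group '{group}' under-represented ({share:.0%} of records)"
            )
    return findings
```

A training pipeline could call `check_dataset` as a gate and refuse to proceed with a model update while the returned list is non-empty. Real bias checks would go further (for example, comparing error rates across groups), so this covers only the simplest completeness and balance indicators.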

Solution Delivered

Datamills redesigned the platform into a compliance-centric AI system, embedding governance controls directly into runtime operations rather than layering them on afterward.

Key enhancements included:

- Revised Architecture

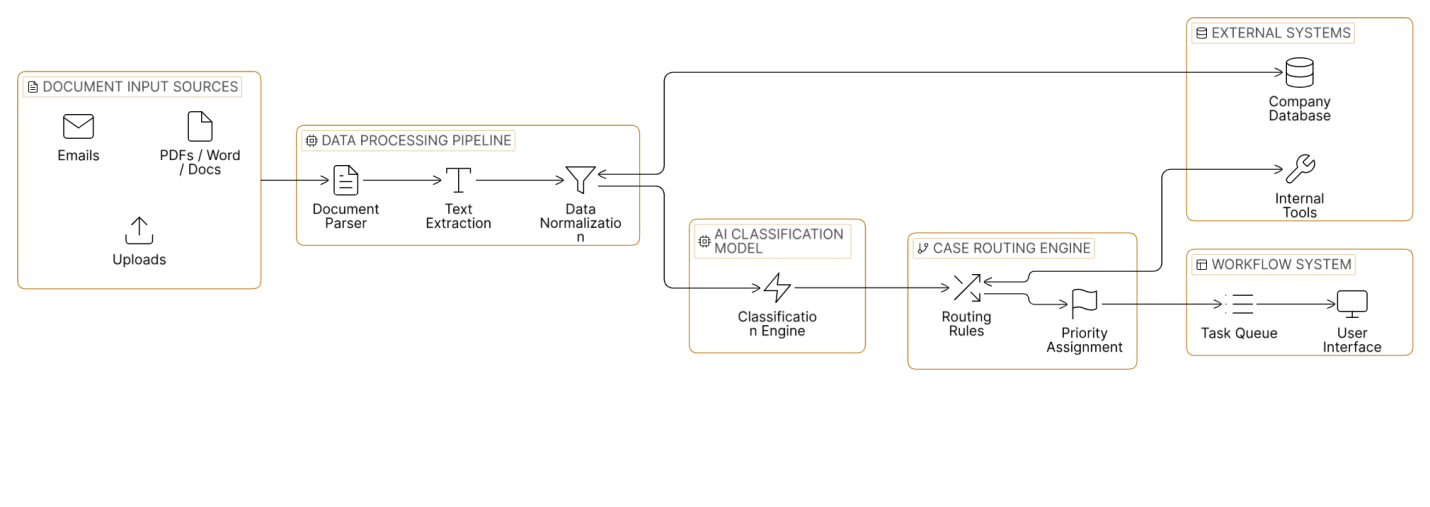

The original monolithic pipeline was decomposed into modular services. Logging pipelines, governance controls, and a compliance orchestrator now sit around the AI model, ensuring every input, output, and decision carries metadata and risk context.

- Runtime Compliance Logging

Each inference now produces structured log events including inputs, predictions, confidence scores, user context, and policy flags. These are stored in an immutable audit trail, enabling full decision reconstruction and regulatory reporting in line with Article 12 requirements.
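One common way to make an audit trail tamper-evident is to chain entries by hash, so that altering any stored event invalidates every later entry. The article does not say how the platform achieves immutability, so the sketch below is an assumption for illustration only: the `AuditLog` class, its in-memory storage, and the event field names are hypothetical, and a production system would typically also rely on write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same body always hashes identically.
        payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, event: dict) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
            "event": event,
        }
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

An inference service would call `append` once per prediction with the fields listed above (input reference, prediction, confidence score, user context, policy flags), and an auditor could run `verify` before trusting a reconstruction of any decision.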

- Explainability & Reporting

Predictions are accompanied by contextual explanations and surfaced to reviewers. Automated reports compile model performance metrics and evaluation results, supporting Annex IV requirements for performance validation and outcome transparency.

- Human Oversight & Escalation

Low-confidence or sensitive cases are routed to qualified professionals through a review interface where outputs can be inspected, confirmed, or overridden. This ensures users retain control over outcomes as required under Article 14.
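The routing rule described here can be sketched in a few lines. The code below is illustrative rather than the platform's implementation: the 0.85 threshold, the `sensitive` flag, and the `Prediction` and `Decision` types are assumptions introduced for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Prediction:
    case_id: str
    label: str
    confidence: float
    sensitive: bool = False  # hypothetical flag set by an upstream policy check


@dataclass(frozen=True)
class Decision:
    case_id: str
    final_label: str
    decided_by: str  # "model" or a reviewer ID
    overridden: bool


def route(pred: Prediction, threshold: float = 0.85) -> Route:
    """Escalate sensitive or low-confidence predictions to a human reviewer."""
    if pred.sensitive or pred.confidence < threshold:
        return Route.HUMAN_REVIEW
    return Route.AUTO


def finalize(pred: Prediction, reviewer_id: Optional[str] = None,
             reviewer_label: Optional[str] = None,
             threshold: float = 0.85) -> Decision:
    """Produce a final decision; escalated cases cannot complete without a reviewer."""
    if route(pred, threshold) is Route.AUTO:
        return Decision(pred.case_id, pred.label, "model", False)
    if reviewer_id is None:
        raise ValueError("human review required before this case can be finalized")
    # The reviewer either confirms the model's label or supplies a different one.
    final = reviewer_label if reviewer_label is not None else pred.label
    return Decision(pred.case_id, final, reviewer_id, final != pred.label)
```

Raising an error when no reviewer is supplied is a deliberate choice in this sketch: an escalated case fails closed instead of silently falling back to the model's output.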

- Automated Technical Documentation

System documentation is now generated directly from architecture definitions, datasets, validation outputs, and model metadata. This ensures documentation remains current and audit-ready, satisfying Article 11 and Annex IV obligations.

- Operational Enablement

Teams were trained to interpret risk signals, manage escalations, and operate the compliance dashboards, ensuring governance processes function effectively in practice.

Together, these changes transformed the platform into a compliant high-risk AI system. Compliance controls now operate continuously at runtime, while documentation and audit trails remain permanently inspection-ready, aligning the system with EU AI Act obligations across Articles 9–15 and Annex IV.

Outcomes

The platform now operates as a fully compliant high-risk AI system with governance embedded into daily operations rather than treated as a periodic audit exercise.

Key outcomes include:

- Regulatory Alignment – The system now satisfies EU AI Act high-risk obligations, with built-in audit trails and documentation pipelines supporting inspection readiness and conformity requirements.

- Improved Trust & Transparency – Explainability layers and decision logging provide visibility into how outcomes are generated, reducing “black-box” concerns and strengthening accountability across workflows.

- Reduced Risk Exposure – Bias monitoring, validation controls, and human review paths significantly lower the likelihood of incorrect or unsafe outputs, reducing legal and reputational risk.

- Operational Efficiency – Automated logging and documentation mean compliance evidence is produced continuously rather than assembled manually, allowing teams to generate reports quickly and simplify audit preparation.

- Future-Ready Architecture – By embedding governance directly into the system design, the platform is positioned to adapt to evolving regulatory requirements, including expanded transparency and explainability expectations under the EU AI framework.

Overall, these improvements shifted the platform from AI-enabled automation to regulated AI infrastructure, enabling the organization to scale AI adoption with confidence while maintaining regulatory readiness.

Technical Notes

Implementation focused on ensuring compliance controls operated reliably at scale without slowing production workflows.

- Service-Oriented Architecture – The system runs as separate services for inference, compliance enforcement, logging, and UI. A middleware compliance engine evaluates each request before results are returned, ensuring enforcement happens inline rather than post-processing.

- Event-Based Audit Pipeline – All system interactions stream into an indexed logging backbone, allowing reconstruction of decisions across services and time windows while supporting high ingestion volumes and long-term retention.

- Linked Decision Records – Structured logs use correlation IDs so inputs, predictions, human interventions, and final outputs remain traceable as a single lifecycle record, supporting Article 12 traceability requirements.

- Integrated Explainability Storage – Feature-attribution outputs are stored alongside decisions so reviewers and auditors can see both the prediction and its supporting signals in one place.

- Continuous Validation Hooks – Training pipelines include automated checks for dataset completeness and bias indicators before deployment, supporting Article 10 data governance expectations.

- Secure Runtime Environment – The system operates within a hardened environment using encrypted storage, validated inputs, and sandboxed inference execution to maintain robustness and cybersecurity alignment.
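To make the inline compliance check and the correlation-ID linking from the notes above more concrete, here is a small sketch. It assumes a model exposed as a callable returning a label and a confidence score, a plain list standing in for the logging backbone, and a single confidence-based policy. All of these names and values are hypothetical.

```python
import uuid
from typing import Callable, Tuple


def handle_request(features: dict,
                   model: Callable[[dict], Tuple[str, float]],
                   sink: list,
                   min_confidence: float = 0.85) -> dict:
    """Run one inference with an inline policy check; log every stage under one ID."""
    correlation_id = str(uuid.uuid4())

    def emit(stage: str, **data) -> None:
        # Every event carries the same correlation ID so stages can be rejoined later.
        sink.append({"correlation_id": correlation_id, "stage": stage, **data})

    emit("input", features=features)
    label, confidence = model(features)
    emit("prediction", label=label, confidence=confidence)

    # The policy is evaluated before anything is returned to the caller.
    released = confidence >= min_confidence
    emit("policy_check", released=released)

    if released:
        emit("output", label=label)
        return {"correlation_id": correlation_id, "status": "released", "label": label}
    return {"correlation_id": correlation_id, "status": "held_for_review"}


def lifecycle(sink: list, correlation_id: str) -> list:
    """Reassemble the ordered events that belong to one decision."""
    return [e for e in sink if e["correlation_id"] == correlation_id]
```

Calling `lifecycle` with the ID returned to the caller yields the full input, prediction, policy check, and output sequence for that decision. A human intervention stage could be appended under the same ID in the same way.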

Client Perspective

“Thanks to Datamills, our platform is now legally rock-solid. The new compliance pipeline and audit logs give us confidence in every AI-driven recommendation. We can demonstrate to our clients and regulators that we’ve met the EU AI Act requirements end-to-end,” said the platform’s CTO.