Operationalizing High-Risk Diagnostic AI for National Healthcare Networks

Overview

Healthcare providers are rapidly scaling AI across radiology and emergency triage to improve patient outcomes. Under the EU AI Act, however, diagnostic AI is generally classified as high-risk, which calls for rigorous, system-enforced controls. For large healthcare networks, the transition from "experimental AI" to "regulated medical infrastructure" is a major hurdle: manual compliance checks can no longer meet the demands of clinical safety and legal accountability.

The Challenge

A national healthcare network was scaling AI-assisted diagnostic tools across multiple departments. While the models showed strong clinical performance, the organization faced a critical regulatory roadblock. Its compliance controls were fragmented and "policy-based," existing in handbooks and PDFs rather than being hard-coded into the systems. With a regulatory deadline approaching, the provider risked being unable to deploy life-saving tools because it could not prove real-time auditability and safety.

Structural Inefficiencies and Critical Gaps

Upon analysis, the network’s AI infrastructure suffered from three systemic failures that threatened its "High-Risk" certification:

- The "Policy vs. Practice" Gap: Compliance existed only as a legal checklist, not as a system-enforced gate. Audits were entirely reactive, requiring clinicians and engineers to stop work for weeks to manually assemble evidence.

- The Data Lineage Vacuum: There was no bi-temporal tracking of datasets. If a regulator asked, "Why was Patient 447 diagnosed this way based on the model state last March?", the system could not reconstruct the specific training context or decision logic at that historical point.

- The Clinical Trust Deficit: Clinicians raised significant concerns about "black-box" outputs. Without a structured, auditable human-in-the-loop (HITL) workflow, there was a high risk of automation bias, where AI suggestions are accepted without sufficient critical review or override.
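To make the lineage gap concrete, the following is a minimal sketch of a bi-temporal lookup, not the network's actual implementation. It assumes a hypothetical append-only registry in which each row carries a clinical validity window (`valid_from`, `valid_to`) and the time it was written (`recorded_at`), and in which corrections are stored as newer rows covering the same period. The names `ModelRecord` and `as_of` are illustrative only.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class ModelRecord:
    """One row in a hypothetical append-only model registry."""
    model_version: str
    dataset_hash: str
    valid_from: datetime           # when this version began serving clinically
    valid_to: Optional[datetime]   # None means still in service
    recorded_at: datetime          # when this row was written to the registry


def as_of(records: List[ModelRecord],
          valid_time: datetime,
          known_at: datetime) -> Optional[ModelRecord]:
    """Return the record in effect at valid_time, as the registry knew it at known_at.

    Assumption: corrections are appended as newer rows for the same period,
    so the latest row recorded on or before known_at wins.
    """
    candidates = [
        r for r in records
        if r.recorded_at <= known_at
        and r.valid_from <= valid_time
        and (r.valid_to is None or valid_time < r.valid_to)
    ]
    return max(candidates, key=lambda r: r.recorded_at, default=None)
```

With two time axes, an auditor can ask both "which model state applied last March?" and "what did the records say about it at the time of the diagnosis?", which a single timestamp cannot answer once a record has been corrected.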

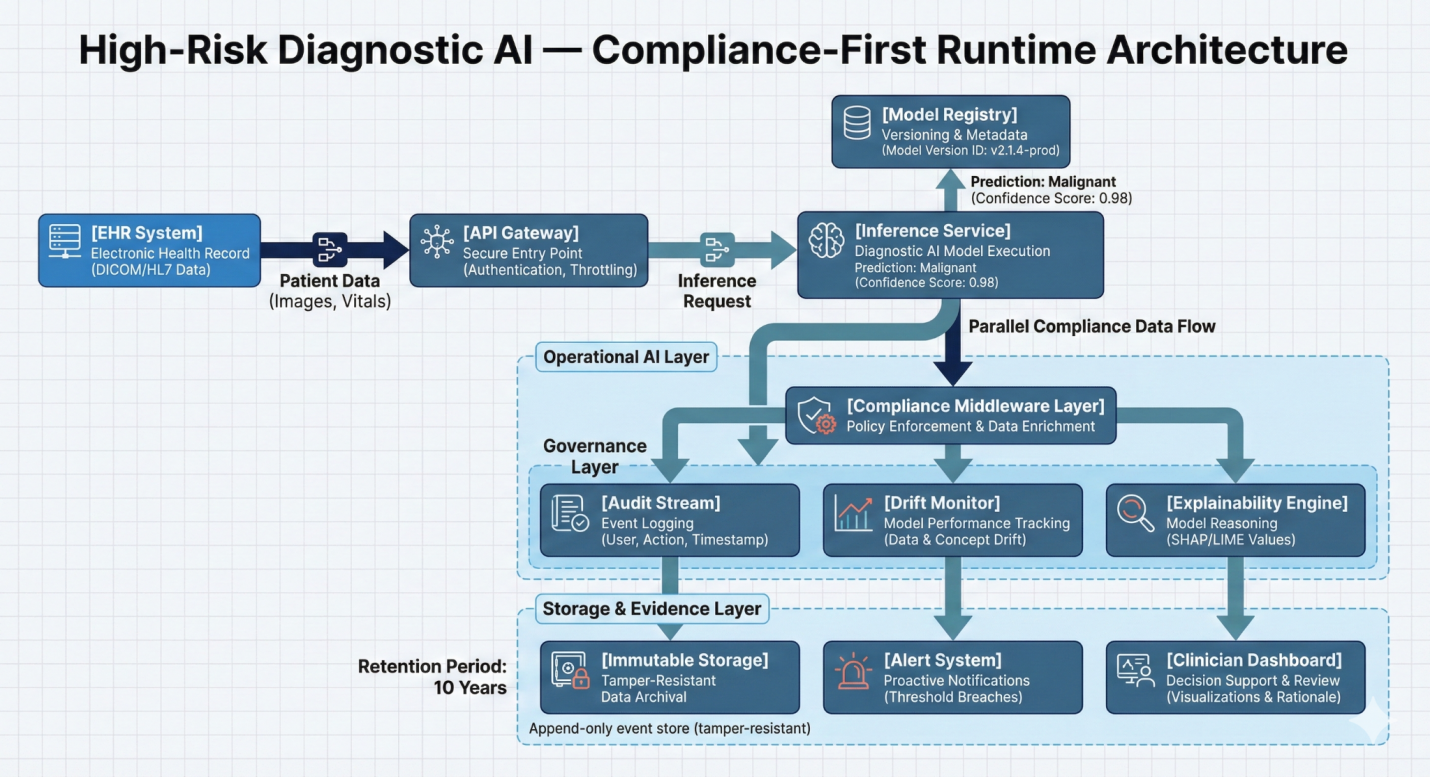

High-Risk Diagnostic AI Compliance-Runtime Architecture

The Economic and Procedural Reality

Under the existing manual framework, audit preparation took 4 to 6 weeks per system, which made scaling AI across a national network financially and operationally impossible. Model drift detection was also slow: performance drops took days to identify, increasing the risk of clinical errors and malpractice exposure.

The Opportunity

Modern healthcare requires a shift from viewing compliance as a "legal hurdle" to treating it as a "Production Capability." By embedding compliance directly into the AI lifecycle, the network could move from reactive documentation to a state of permanent, real-time "inspection readiness."
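One way to picture compliance as a system-enforced gate rather than a checklist is a release check that refuses to promote a model until its evidence is on file. The sketch below is illustrative only: the evidence names in `REQUIRED_EVIDENCE` are assumptions chosen for the example, not a list taken from the EU AI Act or from the network's system, and `ReleaseCandidate` and `enforce_gate` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict

# Assumed evidence categories for illustration; a real list would come
# from the organization's regulatory and clinical governance teams.
REQUIRED_EVIDENCE = (
    "data_lineage",
    "clinical_validation",
    "human_oversight_plan",
    "drift_monitoring",
)


class ComplianceGateError(Exception):
    """Raised when a release candidate lacks required evidence."""


@dataclass
class ReleaseCandidate:
    model_version: str
    # Maps an evidence name to a reference such as a document ID.
    evidence: Dict[str, str] = field(default_factory=dict)


def enforce_gate(candidate: ReleaseCandidate) -> bool:
    """Block deployment unless every required evidence item has a reference."""
    missing = [name for name in REQUIRED_EVIDENCE if not candidate.evidence.get(name)]
    if missing:
        raise ComplianceGateError(
            f"{candidate.model_version} blocked; missing evidence: {', '.join(missing)}"
        )
    return True
```

Because the gate runs on every release, the evidence an auditor would request is collected as a precondition of deployment instead of being assembled afterwards.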