Healthcare: Operationalizing High-Risk Diagnostic AI Under the EU AI Act

TL;DR

A European healthcare provider deploying AI-assisted diagnostic tools needed to meet the high-risk requirements of the EU AI Act. Datamills embedded compliance infrastructure directly into its production systems, enabling continuous risk monitoring, real-time auditability, and enforced human oversight. Audit preparation time dropped from 4–6 weeks to 72 hours, and the systems became inspection-ready at all times.

Problem

The organization was scaling AI across radiology and emergency triage. Model performance was strong, but compliance controls were fragmented and largely policy-based rather than system-enforced. The high-risk obligations that needed system-level support were:

- Continuous risk monitoring (Article 9)

- Data lineage and representativeness controls (Article 10)

- Automated technical documentation (Article 11 / Annex IV)

- Immutable operational logs (Article 12)

- Structured human oversight workflows (Article 14)

Documentation was fragmented, audits were reactive, and clinicians raised concerns about automation bias and “black-box” outputs. Compliance existed in policy form, not in system architecture.

Approach

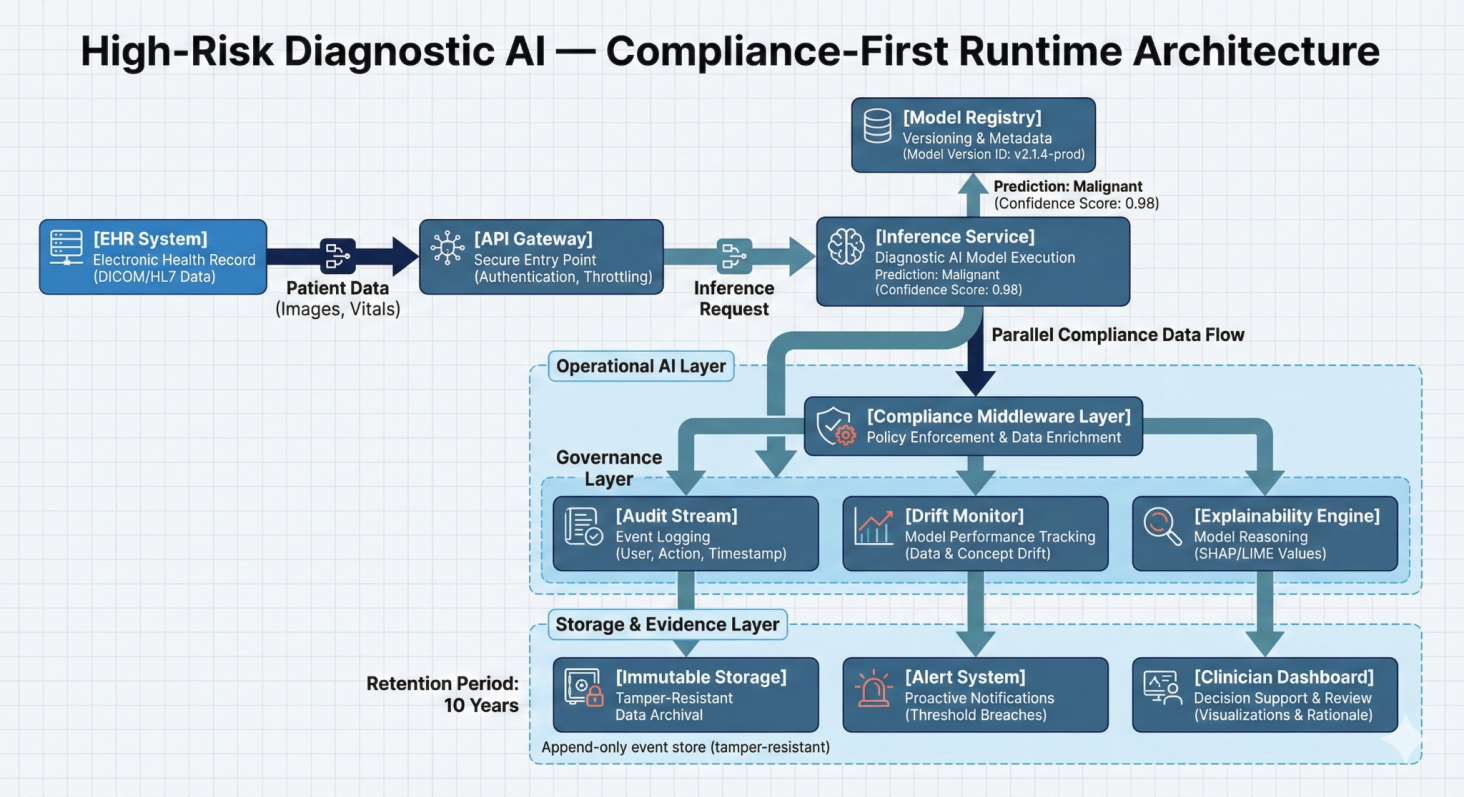

High-Risk Diagnostic AI Compliance Runtime Architecture

Datamills embedded an engineering-led compliance sprint directly into the AI lifecycle. Rather than generating documentation after deployment, we instrumented the system so compliance evidence is produced automatically at runtime.

A shared evidence layer was introduced across model training, deployment, and inference, ensuring that traceability, oversight, and regulatory alignment were built into the production architecture.

Solution Delivered

Immutable Audit Stream

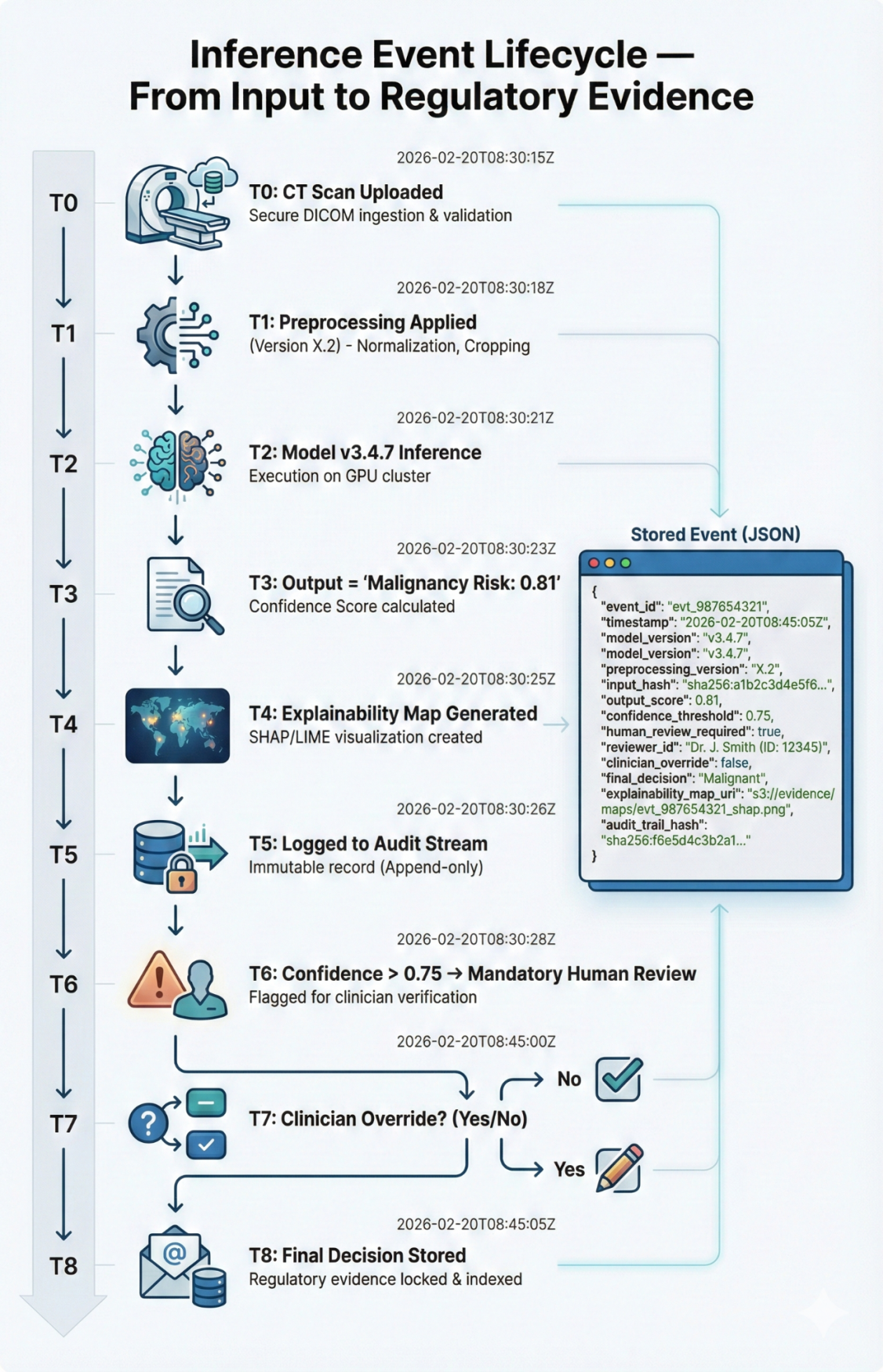

Append-only logging captured model inputs, outputs, confidence scores, model version IDs, and clinician overrides. Every diagnostic decision became fully traceable.
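One common way to make an append-only log tamper-evident is hash chaining: each record stores the SHA-256 hash of its predecessor, so editing an earlier record breaks the chain. The sketch below assumes that technique; it is an illustration, not a confirmed detail of the deployed Kafka-backed stream. The event field names (`model_version`, `input_ref`, `clinician_override`) and the version string are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same event always produces the same hash.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """In-memory, hash-chained, append-only log of inference events (sketch only)."""
    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, event: dict[str, Any]) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _digest(prev_hash, event)
        # Store a JSON round-tripped copy so later edits to the caller's dict
        # cannot silently change the logged record.
        stored = json.loads(json.dumps(event, sort_keys=True))
        self.entries.append({"event": stored, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering, or removing any entry except
        the last breaks it (catching tail truncation needs the latest hash to be
        anchored somewhere outside the log)."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical inference events; field names and values are illustrative only.
log = AuditLog()
log.append({"model_version": "cxr-triage-1.4.2", "input_ref": "study-0001",
            "output": "pneumothorax", "confidence": 0.91, "clinician_override": None})
log.append({"model_version": "cxr-triage-1.4.2", "input_ref": "study-0002",
            "output": "pneumothorax", "confidence": 0.62, "clinician_override": "no_finding"})
```

In a streaming deployment the same chaining would typically be applied per partition by the producer, with a separate consumer re-running `verify()` as an integrity check.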

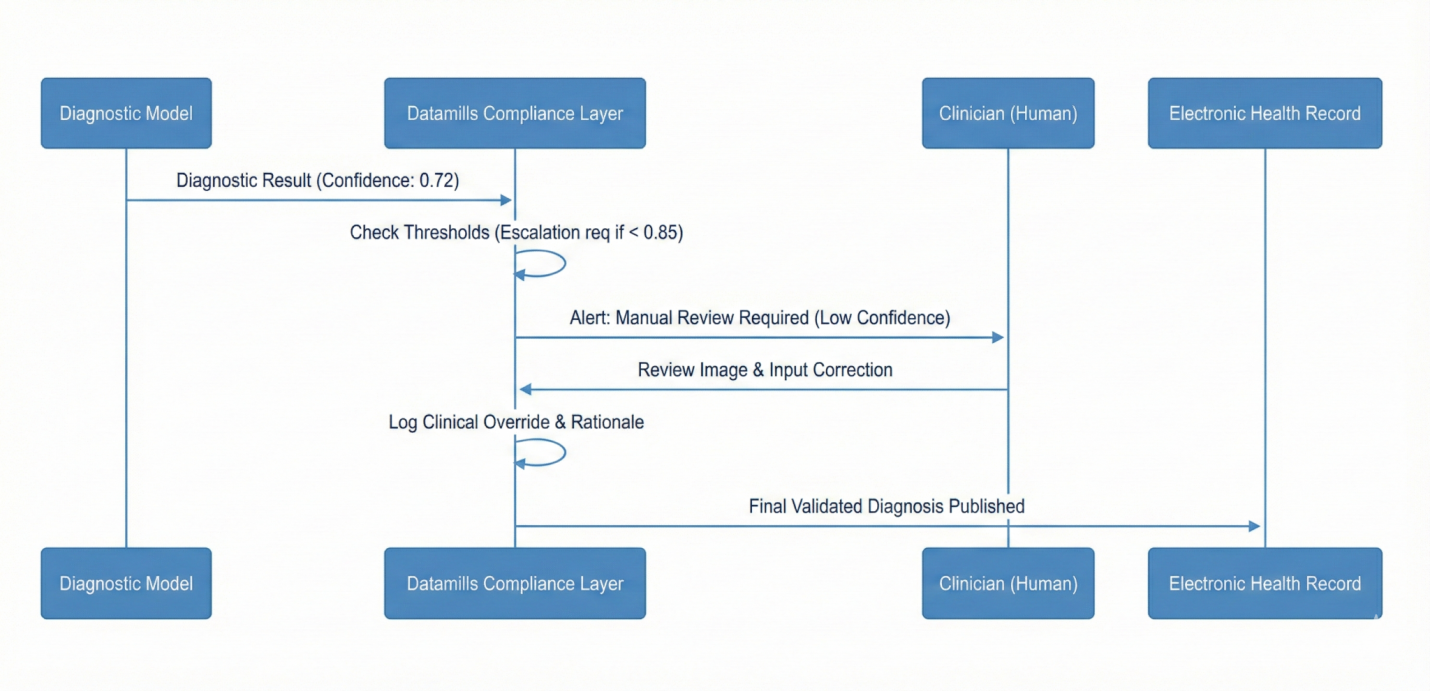

Inference Event Lifecycle Diagram

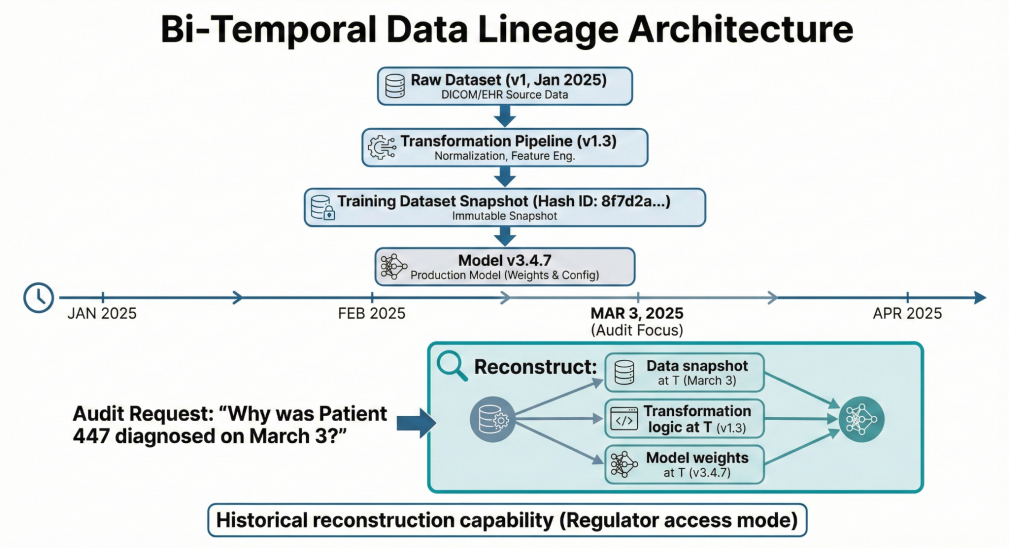

AI-Ready Data Lineage

Bi-temporal dataset tracking enabled reconstruction of training states and decision contexts at any historical point.
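Bi-temporal tracking keeps two time axes per record: valid time (when a dataset state was true) and transaction time (when the system recorded it). The minimal sketch below assumes rows are never updated in place and that corrections are appended with a later `recorded_at`; the dataset and snapshot names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class DatasetVersion:
    dataset: str
    snapshot_id: str
    valid_from: date            # valid time: when this state of the data took effect
    valid_to: Optional[date]    # exclusive end of valid time; None means still current
    recorded_at: date           # transaction time: when this row was written


def as_of(history: list[DatasetVersion], dataset: str,
          valid_on: date, known_on: date) -> Optional[DatasetVersion]:
    """Snapshot valid on `valid_on`, as the system knew it on `known_on`.

    Assumes later-recorded rows supersede earlier ones for overlapping valid
    periods, so the latest-recorded matching row wins.
    """
    matches = [
        v for v in history
        if v.dataset == dataset
        and v.recorded_at <= known_on
        and v.valid_from <= valid_on
        and (v.valid_to is None or valid_on < v.valid_to)
    ]
    return max(matches, key=lambda v: v.recorded_at, default=None)


# Hypothetical history for one training dataset.
HISTORY = [
    DatasetVersion("chest-xr", "cxr-2023q4", date(2024, 1, 1), date(2024, 4, 1), date(2024, 1, 1)),
    # Relabeling correction recorded in March but effective from January.
    DatasetVersion("chest-xr", "cxr-2023q4-relabeled", date(2024, 1, 1), date(2024, 4, 1), date(2024, 3, 15)),
    DatasetVersion("chest-xr", "cxr-2024q1", date(2024, 4, 1), None, date(2024, 4, 1)),
]
```

Querying 1 February with `known_on=date(2024, 2, 1)` returns `cxr-2023q4` (what the pipeline saw then), while `known_on=date(2024, 5, 1)` returns the relabeled snapshot (what is now known to have been true). Keeping both answers available is what lets a past decision context be reconstructed faithfully.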

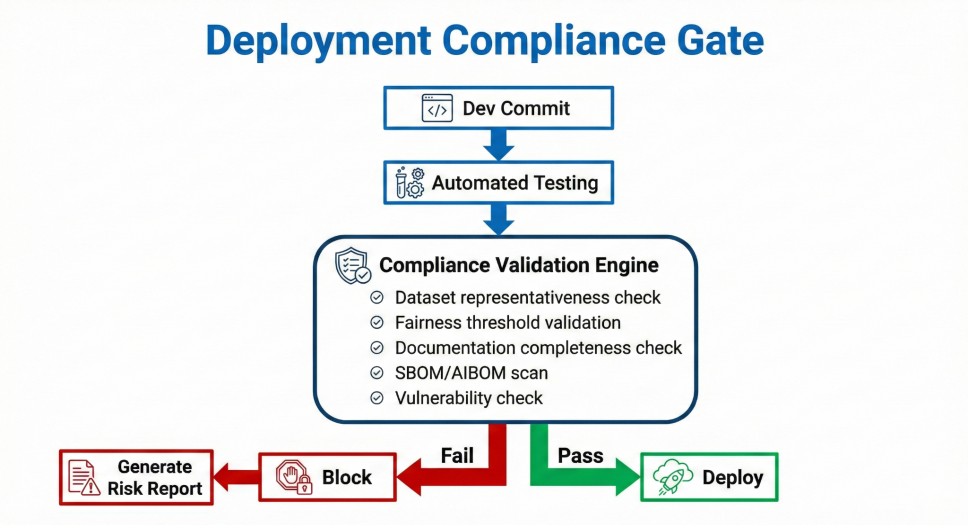

Automated Annex IV Documentation

Technical documentation was generated directly from DevOps repositories, linking architecture diagrams, performance metrics, and model inventories.
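A minimal sketch of generating documentation from repository metadata, assuming each model has an inventory record committed alongside its code. The required fields, section headings, and file path are hypothetical and are not the wording of Annex IV; the point is that a missing field fails the build rather than producing a silently incomplete document.

```python
# Hypothetical fields an inventory record must carry before docs can be built.
REQUIRED_FIELDS = ("name", "version", "intended_purpose", "architecture_diagram", "training_data_snapshot")


def render_technical_doc(model: dict[str, str], metrics: dict[str, float]) -> str:
    """Render a Markdown documentation stub from repository metadata.

    Raises ValueError if the inventory record is incomplete.
    """
    missing = [f for f in REQUIRED_FIELDS if not model.get(f)]
    if missing:
        raise ValueError("inventory record incomplete: missing " + ", ".join(missing))
    lines = [
        f"# Technical documentation: {model['name']} v{model['version']}",
        "",
        "## General description",
        f"Intended purpose: {model['intended_purpose']}",
        "",
        "## System architecture",
        f"Architecture diagram: {model['architecture_diagram']}",
        "",
        "## Training data",
        f"Dataset snapshot: {model['training_data_snapshot']}",
        "",
        "## Performance metrics",
    ]
    # Sorted so the generated file is stable and diffs cleanly in version control.
    lines += [f"- {name}: {value:.3f}" for name, value in sorted(metrics.items())]
    return "\n".join(lines) + "\n"


# Hypothetical inventory record; in practice it would be read from a file in the repo.
MODEL = {
    "name": "cxr-triage",
    "version": "1.4.2",
    "intended_purpose": "Prioritize chest X-rays with suspected pneumothorax for radiologist review",
    "architecture_diagram": "docs/architecture/cxr-triage.svg",
    "training_data_snapshot": "cxr-2023q4-relabeled",
}
# Placeholder metric values for illustration, not reported results.
doc = render_technical_doc(MODEL, {"sensitivity": 0.912, "auroc": 0.94})
```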

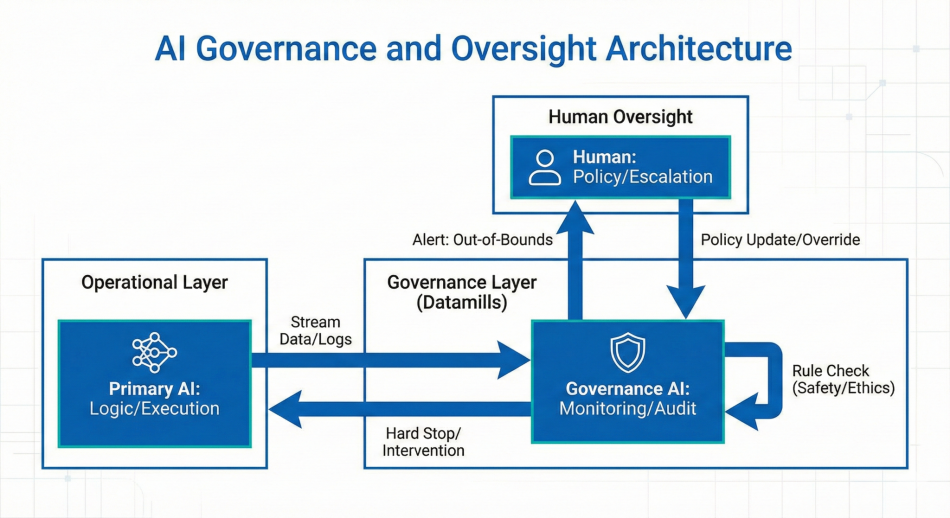

Human-in-the-Loop Escalation Logic

Threshold-based controls required clinician confirmation for high-risk predictions. Low-confidence outputs automatically triggered review, with any clinician override logged before the result was published to the EHR.
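The escalation rules above can be sketched as a small routing function. Both threshold values, the `risk_score` field, and the assumption that confident, low-risk outputs are released without review are hypothetical; the source states only that high-risk predictions need confirmation and low-confidence outputs trigger review.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

CONFIDENCE_FLOOR = 0.80   # hypothetical: below this, the output goes to mandatory review
RISK_THRESHOLD = 0.50     # hypothetical: at or above this, a clinician must confirm


class Route(Enum):
    AUTO_RELEASE = "auto_release"            # assumed path for confident, low-risk outputs
    CLINICIAN_CONFIRM = "clinician_confirm"
    MANDATORY_REVIEW = "mandatory_review"


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float   # model's confidence in `label`
    risk_score: float   # hypothetical estimated probability of a critical finding


def route(pred: Prediction) -> Route:
    # Low confidence is checked first so it always gets the strictest path.
    if pred.confidence < CONFIDENCE_FLOOR:
        return Route.MANDATORY_REVIEW
    if pred.risk_score >= RISK_THRESHOLD:
        return Route.CLINICIAN_CONFIRM
    return Route.AUTO_RELEASE


def finalize(pred: Prediction, clinician_label: Optional[str] = None) -> tuple[str, Optional[dict]]:
    """Return (label to publish to the EHR, override record or None)."""
    r = route(pred)
    if r is Route.AUTO_RELEASE:
        return pred.label, None
    if clinician_label is None:
        # Publication is blocked until a clinician has reviewed the output.
        raise PermissionError(f"{r.value}: clinician sign-off required before EHR publication")
    override = None
    if clinician_label != pred.label:
        # Disagreement is recorded so it can be appended to the audit stream.
        override = {"route": r.value, "model_label": pred.label, "clinician_label": clinician_label}
    return clinician_label, override
```

Raising an exception when sign-off is missing makes the control system-enforced: the publishing code path cannot proceed without a clinician decision, rather than relying on policy.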

Timeline Diagram

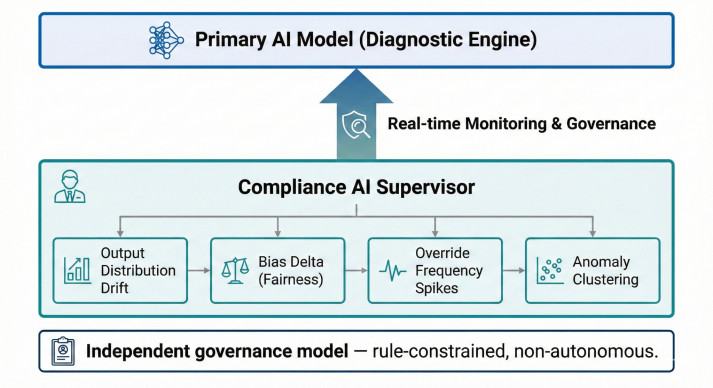

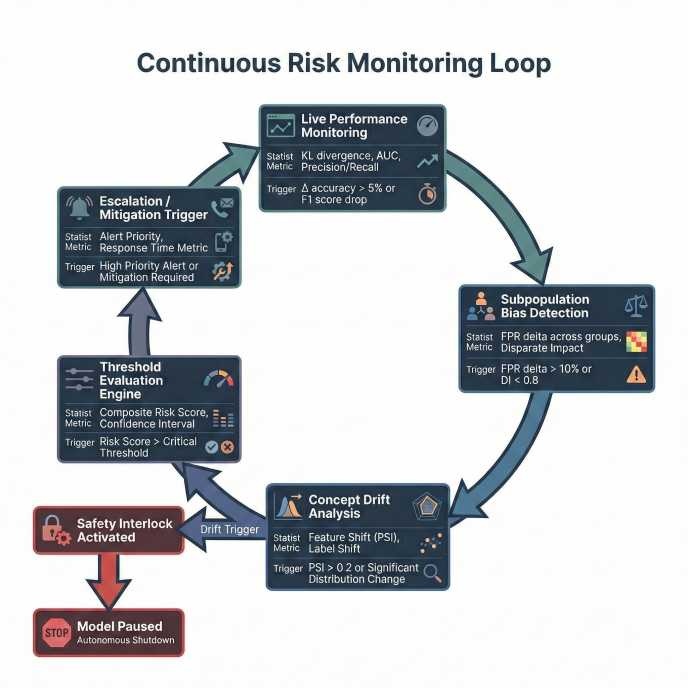

Bias & Drift Monitoring

Continuous subpopulation performance monitoring detected distribution shifts. Automated safety interlocks paused deployment if disparity thresholds were exceeded.
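A minimal sketch of the two checks behind such an interlock. The source does not name a drift metric, so the Population Stability Index (PSI) is used here as a stand-in; the 0.2 PSI limit is a commonly cited rule of thumb, and the 0.05 sensitivity-gap limit and subgroup names are hypothetical.

```python
import math


def psi(reference: list[float], live: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `live` against `reference`, using equal-width bins."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def shares(xs: list[float]) -> list[float]:
        counts = [0] * bins
        for x in xs:
            # Values outside the reference range are clamped into the edge bins.
            counts[min(max(int((x - lo) / width), 0), bins - 1)] += 1
        # eps avoids log(0) for empty bins.
        return [max(c / len(xs), eps) for c in counts]

    ref, cur = shares(reference), shares(live)
    return sum((c - r) * math.log(c / r) for c, r in zip(cur, ref))


def sensitivity_gap(by_group: dict[str, tuple[int, int]]) -> float:
    """Largest difference in sensitivity between subgroups.

    `by_group` maps a subgroup name to (true positives, actual positives).
    """
    rates = [tp / pos for tp, pos in by_group.values() if pos > 0]
    return max(rates) - min(rates)


def deployment_allowed(psi_value: float, gap: float,
                       psi_limit: float = 0.2, gap_limit: float = 0.05) -> bool:
    """Safety interlock: False means the rollout should be paused for review."""
    return psi_value < psi_limit and gap <= gap_limit
```

For example, subgroup sensitivities of 45/50 and 40/50 give a gap of 0.10, which exceeds the hypothetical 0.05 limit, so `deployment_allowed` returns `False`.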

Outcomes

- Audit preparation time reduced from 4–6 weeks → 72 hours

- Model drift detection time reduced from days → under 24 hours

- Regulatory documentation always inspection-ready

- Reduced malpractice exposure through full decision traceability

Technical Notes

Kafka-backed audit streaming, automated documentation pipelines, CI/CD compliance gating, explainability overlays integrated into the EHR interface, and Zero Trust access controls.
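CI/CD compliance gating can be sketched as a pipeline step that refuses to deploy unless the expected evidence is present and passing. The artifact file names and the `chain_valid` / `within_thresholds` keys are hypothetical; the gate logic is kept separate from file loading so it can be unit-tested.

```python
import json
from pathlib import Path

# Hypothetical evidence artifacts a release must carry before it can deploy.
REQUIRED_EVIDENCE = ("technical_doc.md", "audit_chain_check.json", "bias_report.json")


def evaluate_gate(evidence: dict[str, str]) -> list[str]:
    """Return blocking problems; an empty list means the release may proceed.

    `evidence` maps artifact file names to their text contents.
    """
    problems = [f"missing evidence: {name}" for name in REQUIRED_EVIDENCE if name not in evidence]
    # Hypothetical pass/fail flags written by earlier pipeline stages.
    for name, key in (("audit_chain_check.json", "chain_valid"), ("bias_report.json", "within_thresholds")):
        if name in evidence and not json.loads(evidence[name]).get(key, False):
            problems.append(f"{name}: '{key}' is not true")
    return problems


def load_evidence(release_dir: Path) -> dict[str, str]:
    """Read whichever required artifacts exist in the release directory."""
    return {
        name: (release_dir / name).read_text(encoding="utf-8")
        for name in REQUIRED_EVIDENCE
        if (release_dir / name).is_file()
    }
```

A CI job would call `evaluate_gate(load_evidence(Path("release")))`, print any problems, and exit non-zero if the list is non-empty, which blocks the deployment stage.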

Client Perspective

“Compliance shifted from a legal checklist to a production capability. We now deploy AI knowing it can withstand regulatory scrutiny.”