TL;DR

A 12-hospital integrated delivery network (IDN) deployed a sepsis early warning system that processes more than 50,000 data points per hour. DataMills turned this "black box" AI into a forensic-grade clinical asset: WORM (write-once, read-many) snapshots allow any alert to be reconstructed throughout a 3-year litigation window, the Intervention Layer reduced false alerts by 60% while ensuring 100% human validation of high-risk cases, and Technical Nutrition Labels increased clinician trust from 42% to 81%. Compliance moved from policy documents to enforced infrastructure.

Key Performance Indicators

The table below summarizes the results of the DataMills implementation:

| Metric | Baseline | Post-Implementation | Improvement/Value |
|---|---|---|---|
| Audit reconstruction time | 3-6 weeks (often impossible) | <2 seconds | >99.9% faster; litigation-ready |
| False alert rate | 34% of alerts | 13.6% of alerts | -60%; 2,400 fewer interruptions per shift across the IDN |
| Clinician trust score | 42% | 81% | Increased adoption and efficacy |
| Implementation duration | N/A | 10 weeks | Rapid deployment |
| End-to-end latency | N/A | <120 ms (118 ms p99) | Real-time decision support |

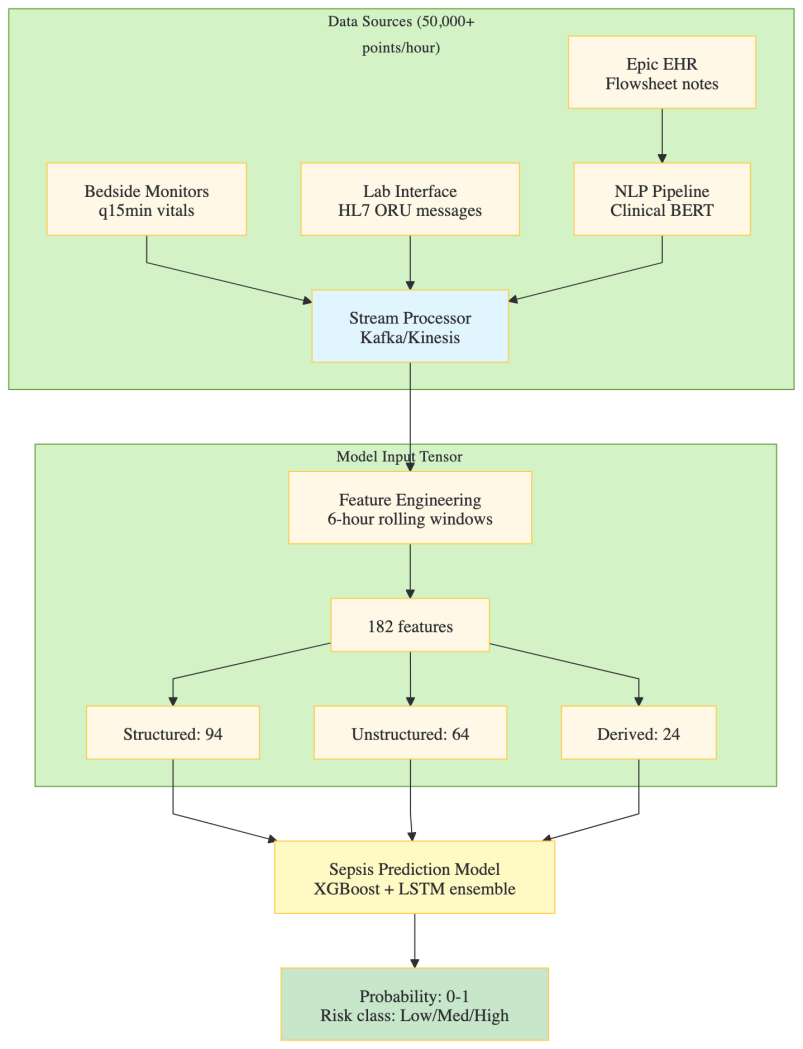

The AI System: Sepsis Early Warning System (EWS)

Input Architecture:

Model Specifications

- Architecture: Gradient-boosted decision trees (XGBoost) for structured data, augmented with Long Short-Term Memory (LSTM) networks for temporal sequences

- Training Dataset: 89,000 confirmed sepsis cases (2019-2024)

- Validation AUC-ROC: 0.87 (retrospective analysis), 0.84 (prospective pilot)

- Inference Protocol: Real-time and event-triggered; the model re-scores a patient whenever a new laboratory result or vital sign is recorded

- Prediction Horizon: Six hours before the onset of septic shock

- Epic Integration: Interruptive Best Practice Alerts (BPAs) in the clinical workflow
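The event-triggered inference protocol can be sketched as a handler that updates a patient's latest values and runs the model only when the new event is a lab result or vital sign. This is a minimal sketch under stated assumptions: `score_fn` is a hypothetical stand-in for the XGBoost/LSTM model, and the in-memory per-patient store is illustrative, not the deployed design.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional

# Observation kinds that trigger inference, per the protocol above.
TRIGGER_KINDS = {"lab", "vital"}


@dataclass
class Observation:
    patient_id: str
    kind: str    # "lab", "vital", or another kind such as "note"
    name: str    # e.g. "lactate", "heart_rate"
    value: float


@dataclass
class EventTriggeredScorer:
    """Re-scores a patient each time a new lab result or vital sign arrives.

    `score_fn` is a hypothetical stand-in for the XGBoost + LSTM model: it
    receives the patient's latest value per measurement and returns a
    sepsis probability.
    """

    score_fn: Callable[[dict[str, float]], float]
    latest: dict[str, dict[str, float]] = field(default_factory=dict)

    def on_observation(self, obs: Observation) -> Optional[float]:
        # Record the newest value for this measurement, whatever its kind.
        self.latest.setdefault(obs.patient_id, {})[obs.name] = obs.value
        if obs.kind not in TRIGGER_KINDS:
            return None  # non-trigger events update state but run no inference
        # Pass a copy so the model cannot mutate the stored state.
        return self.score_fn(dict(self.latest[obs.patient_id]))
```

Keeping non-trigger events in the state means the next lab or vital is scored against the patient's full current picture, while inference cost stays tied to the events the protocol names.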

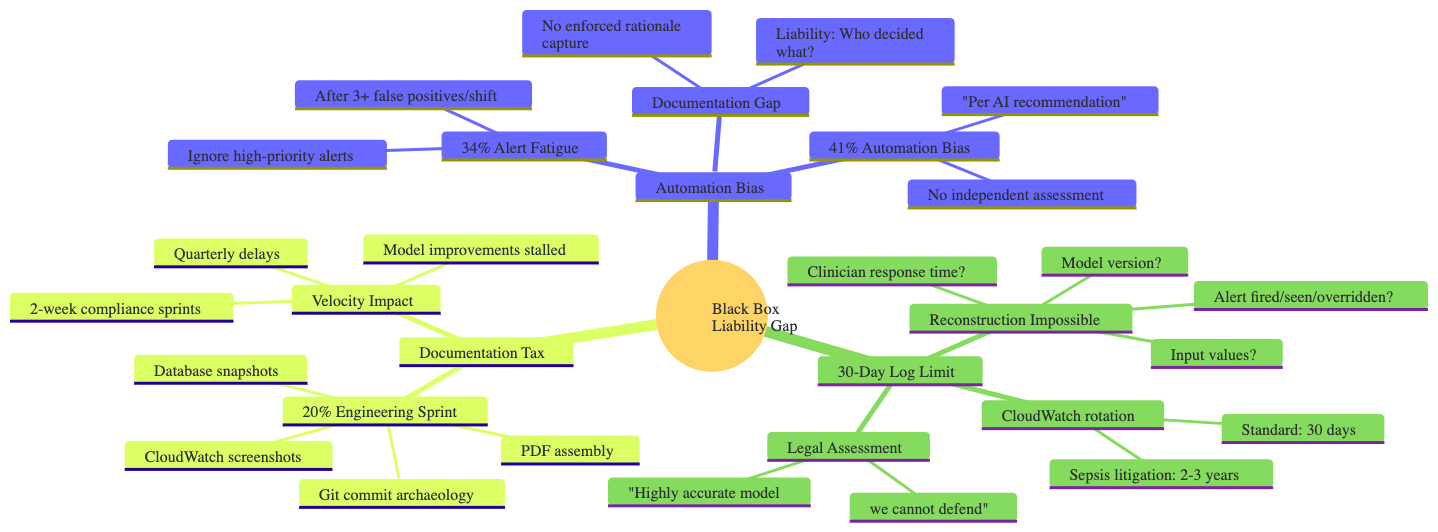

The Crisis: The "Black Box" Liability Gap

The highly accurate Sepsis Early Warning System (EWS) suffered from three critical structural failures that converted clinical speed into legal liability and operational friction. This "Black Box" liability gap made the model virtually indefensible in litigation, despite its predictive power.

Three Structural Failures

Automation Bias & Alert Fatigue

The alert design produced two opposing human-factors problems:

- Alert Fatigue: Faced with multiple false positives per shift, clinicians came to ignore 34% of high-priority alerts.

- Automation Bias: Clinicians acted on the AI's "recommendation" without independent assessment and documented their actions as "Per AI recommendation," which shifted accountability to the model while capturing no clinical rationale.

Documentation Gap

The system had no enforced mechanism for capturing the AI's rationale, the clinician's decision, or the exact state of the EHR at the time of the alert.

- Liability: Without this record, legal teams could not establish who decided what, or on what evidence.

30-Day Log Limit

The IDN's standard cloud logging policy (e.g., for CloudWatch log groups) rotated logs after 30 days.

- Discovery Window: Sepsis litigation often reaches discovery 2-3 years after the clinical event.

- Reconstruction Impossible: After 30 days, essential forensic data (the exact model version, the input values, and whether the alert was fired, seen, or overridden) was permanently lost.

Legal Assessment

The verdict from the IDN's legal team was: "Highly accurate model we cannot defend."

Documentation Tax & Velocity Impact

The effort to achieve rudimentary compliance created significant organizational friction:

- Documentation Tax: 20% of the Engineering Sprint was spent on manual compliance tasks (Git commit archaeology, database snapshots, CloudWatch screenshots, PDF assembly).

- Velocity Impact: A 2-week compliance sprint every quarter stalled model improvements and slowed feature delivery.

The Litigation Timeline Problem

The disconnect between the clinical event timeline, legal statutes, and data retention policies created an Evidence Gap.

| Timeline Phase | Duration | Standard Data Retention | Issue |
|---|---|---|---|
| Sepsis event to start of discovery | 2-3 years | 30 days (AI logs) | The critical gap: about 882 days (the 2.5-year midpoint less 30 days of retention) during which the AI's decision-making process was unrecoverable. |
| Statute of limitations | Varies by jurisdiction | N/A | Legal action often begins long after technical logs are purged. |
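The 882-day figure follows from a 2.5-year discovery lag (about 912 days) minus the 30-day retention. A minimal sketch of that arithmetic, assuming 365-day years and that logs are purged exactly `retention_days` after the sepsis event (the function name is hypothetical):

```python
DAYS_PER_YEAR = 365  # simplifying assumption: ignores leap days


def evidence_gap_days(discovery_lag_years: float, retention_days: int) -> int:
    """Days between log purge and the start of discovery.

    Assumes logs are deleted exactly `retention_days` after the sepsis event
    and discovery starts `discovery_lag_years` after it. A result of 0 means
    the logs still exist when discovery begins.
    """
    lag_days = int(discovery_lag_years * DAYS_PER_YEAR)
    return max(0, lag_days - retention_days)


if __name__ == "__main__":
    for years in (2, 2.5, 3):
        print(years, evidence_gap_days(years, retention_days=30))
```

Across the stated 2-3 year range, the gap runs from 700 to 1,065 days. Only a retention period at least as long as the longest expected discovery lag (for example, 1,096 days for three years including a leap day) drives it to zero.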

The DataMills Implementation: From Alerts to Evidence

The DataMills framework was deployed to close the liability gap, transforming the EWS from a high-velocity alert system into a forensic-grade, clinically auditable evidence engine.

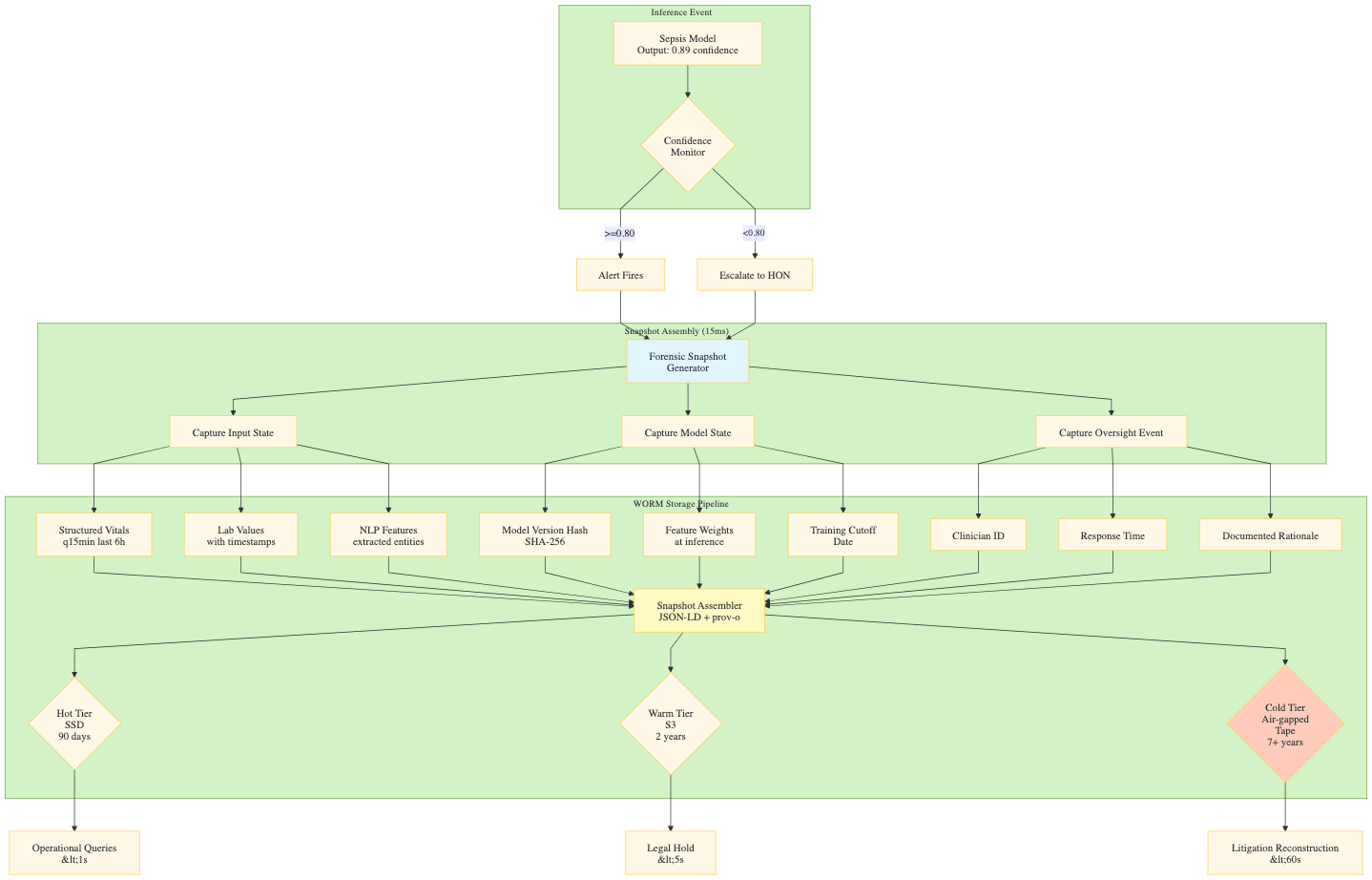

Component 1: The Forensic Snapshot (EU AI Act Article 12 Compliance)

Technical Architecture
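The snapshot architecture itself is not reproduced here. The following is an illustrative sketch only. It assumes each snapshot records the data the 30-day log policy used to destroy (model version, input values, and whether the alert fired, was seen, or was overridden) and that tamper evidence comes from SHA-256 hash chaining. All names are hypothetical, and the in-memory list only simulates WORM behavior. A production system would enforce immutability at the storage layer, for example with an object-lock feature of a cloud object store.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ForensicSnapshot:
    alert_id: str
    timestamp_utc: str   # ISO 8601
    model_version: str
    inputs: dict         # exact input values seen at inference time
    probability: float
    alert_fired: bool
    alert_seen: bool
    overridden: bool
    prev_hash: str       # digest of the previous snapshot in the chain

    def digest(self) -> str:
        # Canonical JSON (sorted keys, no whitespace) keeps the hash reproducible.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class SnapshotLog:
    """Append-only, hash-chained log; an in-memory stand-in for WORM storage."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: list[tuple[ForensicSnapshot, str]] = []

    def append(self, **fields) -> str:
        prev = self._entries[-1][1] if self._entries else self.GENESIS
        snap = ForensicSnapshot(prev_hash=prev, **fields)
        digest = snap.digest()
        self._entries.append((snap, digest))
        return digest

    def verify(self) -> bool:
        # Any edited, reordered, or deleted entry breaks the chain.
        prev = self.GENESIS
        for snap, digest in self._entries:
            if snap.prev_hash != prev or snap.digest() != digest:
                return False
            prev = digest
        return True
```

Chaining each snapshot to its predecessor means that altering or removing any single record invalidates every later digest. The integrity check therefore covers the whole litigation window rather than individual rows.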


Component 2: The Intervention Layer (Article 14 Human Oversight)

Confidence Monitor Logic (Decision Flow)

Each sepsis model output (e.g., probability 0.73) passes through a Confidence Monitor with a threshold of 0.80, which selects the action path:

- AUTO-PATH (Probability ≥ 0.80): The alert fires immediately and is published to the EHR as an Epic BPA.

- ESCALATE-PATH (Probability < 0.80): The system runs a three-pass Recursive Checking process to validate the signal.
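The routing rule reduces to a single threshold comparison. The following is a minimal sketch with hypothetical names (`route`, `Path`). The three recursive checking passes are not modeled, because their logic is not described here.

```python
from enum import Enum

CONFIDENCE_THRESHOLD = 0.80  # threshold stated for the Confidence Monitor


class Path(Enum):
    AUTO = "AUTO-PATH"          # publish the alert to the EHR immediately
    ESCALATE = "ESCALATE-PATH"  # hand off to recursive checking and human review


def route(probability: float, threshold: float = CONFIDENCE_THRESHOLD) -> Path:
    """Select the action path for one sepsis model output."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError(f"probability must be in [0, 1], got {probability}")
    return Path.AUTO if probability >= threshold else Path.ESCALATE
```

`route(0.73)` returns `Path.ESCALATE`, matching the example above. A score of exactly 0.80 takes the AUTO-PATH, as the ≥ in the rule requires.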

Human Override Node (HON):

The Rapid Response Nurse receives a Context Package (full vitals trend, lab timeline, similar cases, suggested actions) and selects one of three actions:

- Validate: The alert is released to the EHR.

- Override: The override is logged with a required rationale and routed to the Quality Review Queue.

- Escalate: The physician is notified and the Code Sepsis Team is activated.
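The key enforcement point is that an override cannot be recorded without a rationale. The following is a minimal sketch. The enum, dataclass, and return strings are hypothetical stand-ins for the real EHR, paging, and review-queue integrations.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    VALIDATE = "validate"
    OVERRIDE = "override"
    ESCALATE = "escalate"


@dataclass
class Decision:
    alert_id: str
    nurse_id: str
    action: Action
    rationale: str = ""


def apply_decision(decision: Decision, quality_review_queue: list[Decision]) -> str:
    """Return the downstream effect of one Human Override Node decision."""
    if decision.action is Action.VALIDATE:
        return "alert_released_to_ehr"
    if decision.action is Action.OVERRIDE:
        # Enforce the "Rationale Required" rule before anything is logged.
        if not decision.rationale.strip():
            raise ValueError("an override must include a rationale")
        quality_review_queue.append(decision)
        return "override_logged"
    # Remaining case: Action.ESCALATE
    return "physician_notified_and_code_sepsis_activated"
```

Rejecting a blank rationale at the point of entry, rather than flagging it afterward, is what turns "Rationale Required" from a policy into an enforced field.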

Component 3: Technical Nutrition Labels (Article 13 Transparency)

Clinician Interface Design:

Unlike a generic "Traditional Alert," the Technical Nutrition Label gives clinicians the context they need to trust a prediction and make an informed decision.

- Traditional Alert: Low context (e.g., "⚠️ SEPSIS ALERT, Score: 88"). The missing context erodes trust, so clinicians either ignore the alert or follow it blindly.

- Technical Nutrition Label (High Context):

  - Primary Drivers: Lists key input factors with weight and direction (e.g., 🔴 Lactate increasing: 3.0 → 4.2 mmol/L, Weight: 34%).

  - Model Confidence: States an explicit confidence level and its calibration (e.g., 89% confident sepsis within 6 hours; Calibrated: 87% accurate).

  - Historical Accuracy: Reports performance for a similar patient cohort (e.g., For patients like this (Age 67+, CKD, DM): 92% sensitivity).

  - Suggested Actions: Offers a checklist of next steps (e.g., ✓ Blood cultures x2, ✓ Broad-spectrum antibiotics, ✓ 30 mL/kg IV fluids).

  - Outcome: Supports an informed decision and a documented rationale.
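The label is a fixed layout over four pieces of model metadata. A minimal plain-text rendering sketch follows, assuming the drivers, calibration, and cohort statistics are already computed upstream. The function and field names are hypothetical, as is the 🟢 marker for risk-lowering inputs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Driver:
    description: str   # e.g. "Lactate increasing: 3.0 → 4.2 mmol/L"
    weight: float      # share of the prediction attributed to this input, 0-1
    raises_risk: bool  # direction of the effect


def render_label(
    drivers: list[Driver],
    probability: float,
    calibrated_accuracy: float,
    horizon_hours: int,
    cohort: str,
    cohort_sensitivity: float,
    actions: list[str],
    top_n: int = 3,
) -> str:
    """Render a plain-text label with the four sections described above."""
    lines = ["PRIMARY DRIVERS"]
    # Show only the heaviest drivers so the label stays scannable.
    for d in sorted(drivers, key=lambda d: d.weight, reverse=True)[:top_n]:
        marker = "🔴" if d.raises_risk else "🟢"  # 🟢 for risk-lowering inputs is an assumption
        lines.append(f"  {marker} {d.description}, Weight: {d.weight:.0%}")
    lines += [
        "MODEL CONFIDENCE",
        f"  {probability:.0%} confident sepsis within {horizon_hours} hours "
        f"(Calibrated: {calibrated_accuracy:.0%} accurate)",
        "HISTORICAL ACCURACY",
        f"  For patients like this ({cohort}): {cohort_sensitivity:.0%} sensitivity",
        "SUGGESTED ACTIONS",
    ]
    lines += [f"  ✓ {a}" for a in actions]
    return "\n".join(lines)
```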

Measured Outcomes

Quantitative Results

| Metric | Baseline (Pre-DataMills) | Post-DataMills | Delta | Methodology |
|---|---|---|---|---|
| Audit reconstruction time | 3-6 weeks (often impossible) | <2 seconds | -99.9% | Legal team simulation exercises |
| False alert rate | 34% of all alerts | 13.6% of all alerts | -60% (-20.4 pts) | Comparison of 10,000 alerts pre/post |
| Nurse interruptions per shift | 4,000 across IDN | 1,600 across IDN | -2,400 (-60%) | Epic audit log analysis |
| Clinician trust score | 42% (self-reported) | 81% (self-reported) | +93% (+39 pts) | Quarterly survey, n=340 clinicians |
| Alert response time | 4.2 minutes median | 1.8 minutes median | -57% | Time from alert to acknowledgment |
| Appropriate sepsis bundle initiation | 67% | 94% | +40% (+27 pts) | Chart review of 500 cases |
| Documentation completeness | 45% with rationale | 100% with rationale | +122% (+55 pts) | Required field enforcement |
| Engineering compliance time | 20% of sprint capacity | <2% (automated) | -90% | Jira time tracking |
| End-to-end latency | N/A (no oversight layer) | 87 ms p50, 118 ms p99 | Baseline established | Distributed tracing |
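Most Delta values are relative changes. For metrics that are themselves percentages, a relative change differs from the percentage-point difference: trust rose 39 points, which is a 93% relative increase. The short check below recomputes the relative deltas from the table's own baseline and post values. The <2% compliance figure is taken at its 2% upper bound, and the helper name is hypothetical.

```python
def relative_change_pct(baseline: float, post: float) -> float:
    """Relative change, as a percentage of the baseline value."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (post - baseline) / baseline * 100.0


# (baseline, post) pairs taken from the Measured Outcomes table.
METRICS = {
    "False alert rate (%)": (34.0, 13.6),
    "Nurse interruptions per shift": (4000, 1600),
    "Clinician trust score (%)": (42.0, 81.0),
    "Alert response time (min)": (4.2, 1.8),
    "Sepsis bundle initiation (%)": (67.0, 94.0),
    "Documentation completeness (%)": (45.0, 100.0),
    "Engineering compliance time (% of sprint)": (20.0, 2.0),
}

if __name__ == "__main__":
    for name, (before, after) in METRICS.items():
        print(f"{name}: {relative_change_pct(before, after):+.0f}% relative, "
              f"{after - before:+g} absolute")
```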

Technical Specifications

| Parameter | Specification |
|---|---|
| Deployment model | Dedicated VPC per IDN, zero multi-tenancy |
| Data residency | US-East (Virginia) primary, US-West (Oregon) DR |
| EHR integration | Epic Hyperspace BPA, HL7 FHIR R4, SMART on FHIR |
| Inference latency | Model: p50 45 ms, p99 87 ms; DataMills oversight: p50 18 ms, p99 31 ms |
| Snapshot write latency | Hot tier: <50 ms; warm tier: <200 ms; cold tier: asynchronous |
| Storage capacity | 50M snapshots (hot), 500M (warm), unlimited (cold) |
| Availability SLA | 99.99%, excluding planned maintenance |
| RPO / RTO (audit stream) | RPO: 0; RTO: 4 hours |
| Encryption | AES-256-GCM at rest, TLS 1.3 in transit, HSM-backed keys |