The Engineering of Legal Truth: Navigating the EU AI Act in Production

Subject: Moving Compliance from a Legal Memo to the Production Stack

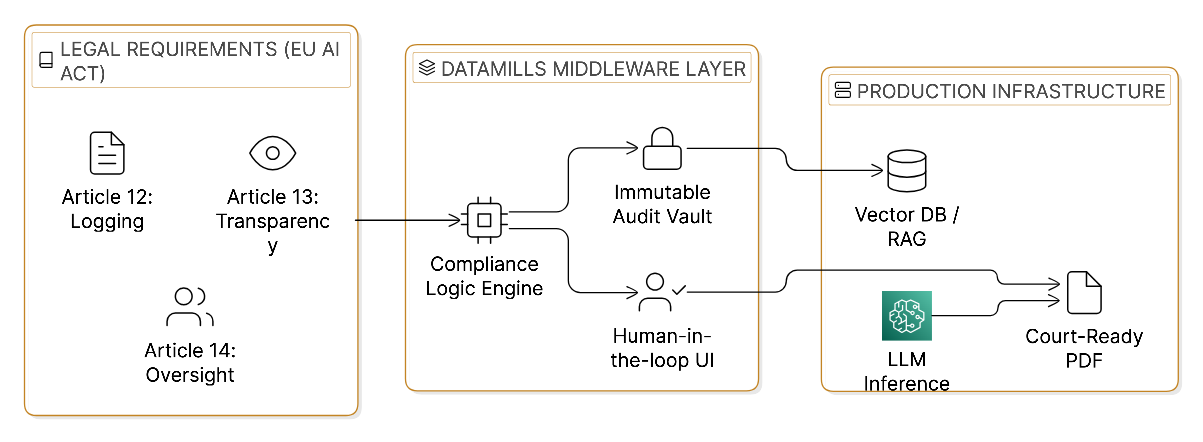

On paper, the EU AI Act is a complex set of legal requirements. To the architects and developers at DataMills, it is a technical specification for the future of software.

We have reached a critical juncture in the deployment of Artificial Intelligence. The compliance timeline is no longer a distant, theoretical concern. On August 2, 2026, the full force of the Act applies to High-Risk AI Systems (Annex III). This category specifically includes AI used in the administration of justice and legal services, the exact space where our engineering teams have spent years building high-performance infrastructure. Yet many organizations still treat governance as a documentation exercise. If your AI handles medical data, legal decision support, or court-bound evidence, you are no longer just building a product; you are building a regulated legal instrument.

At DataMills, we have seen a dangerous trend: organizations treating the EU AI Act as a "policy problem" to be solved by consultants with 100-page PDFs. But regulators don't audit your intentions; they audit your system states. If your production code doesn’t have a functional "Kill Switch" or an immutable logging pipeline, no policy document will make the pipeline compliant.

To bridge this "Consultant Gap," we have architected our systems, including our previous builds for medical chronologies and legal automation, to treat regulatory risk as a state management problem.

1. High-Risk Realities: When Code Becomes a Legal Instrument

Under the EU AI Act framework, any system that significantly influences legal decisions or processes sensitive medical data for legal claims is classified as High-Risk. Specifically, AI systems intended for use by judicial authorities or in the "administration of justice" fall under the strict oversight of Annex III.

One of our core architectural challenges involved building a system that could ingest thousands of messy medical documents (PDFs, X-rays, and ICD/CPT reports) and transform them into structured, court-ready timelines. When we built these internal systems for Medical Chronology and Demand Letter Generation, we recognized that our pipeline wasn't just "summarizing text." It was extracting ICD/CPT codes, calculating billings, and structuring a timeline of events that can determine the outcome of a victim's case.

In this environment, an error isn't just a "bug"; it's a legal liability. If you are wrapping a foundational model like GPT-4o via an API to handle these tasks, the law demands systemic resilience. You are the deployer; you own the risk of that model’s hallucinatory behavior. You cannot simply wrap a model in a basic UI and call it a day.

2. Article 12: Why "Forensic Snapshots" Beat Standard Logging

Article 12 of the Act mandates strict record keeping and traceability. Most engineering teams rely on ephemeral logs that rotate every 30 days. In a legal context, this is a catastrophic failure: in a courtroom, pointing to a log file that has already been deleted is not a defense.

In our in-house Vectorization Pipeline, which we designed for high-precision information retrieval for lawyers and victims, a single query is often decomposed into multiple sub-queries to ensure the LLM finds the most relevant medical evidence. Each of those sub-queries is part of how a conclusion was reached, so each one has to be traceable.

The DataMills Solution:

We treat every inference as a forensic artifact. We implement WORM (Write Once, Read Many) Storage to create an Immutable Audit Trail. For every chronology or demand letter generated, our infrastructure captures:

- Model Version Hash: The SHA-256 hash of the specific model weights used at the time of execution.

- Input Snapshot: The raw tensor data or JSON payload the model "saw" before any normalization.

- The Logic Path: A map of which vector clusters and prompts were activated to reach the final conclusion.

This ensures that if a medical fact is challenged in 2027, the system can provide a "Forensic Snapshot" of exactly how that conclusion was reached.
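A minimal sketch of how such a snapshot record could be made tamper-evident, assuming a simple hash chain in which every record embeds the hash of the one before it. The names `AuditTrail`, `append`, and `verify` are hypothetical, the in-memory list only stands in for real WORM storage, and the raw input is represented by its SHA-256 digest for brevity (in practice the payload itself would be stored alongside).

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def canonical_bytes(body: dict) -> bytes:
    # Canonical JSON so the same body always hashes to the same value.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


@dataclass
class AuditTrail:
    """Append-only, hash-chained inference records (illustrative only)."""

    records: list = field(default_factory=list)

    def append(self, model_version_hash: str, raw_input: bytes, logic_path: list) -> dict:
        prev_hash = self.records[-1]["record_hash"] if self.records else GENESIS_HASH
        body = {
            "model_version_hash": model_version_hash,
            "input_sha256": sha256_hex(raw_input),
            "logic_path": logic_path,
            "prev_hash": prev_hash,
        }
        record = {**body, "record_hash": sha256_hex(canonical_bytes(body))}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record breaks the chain."""
        prev_hash = GENESIS_HASH
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "record_hash"}
            if record["prev_hash"] != prev_hash:
                return False
            if record["record_hash"] != sha256_hex(canonical_bytes(body)):
                return False
            prev_hash = record["record_hash"]
        return True
```

Chaining the hashes means a single altered record invalidates everything after it, which is the property an auditor would want to check. It complements write-once storage rather than replacing it.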

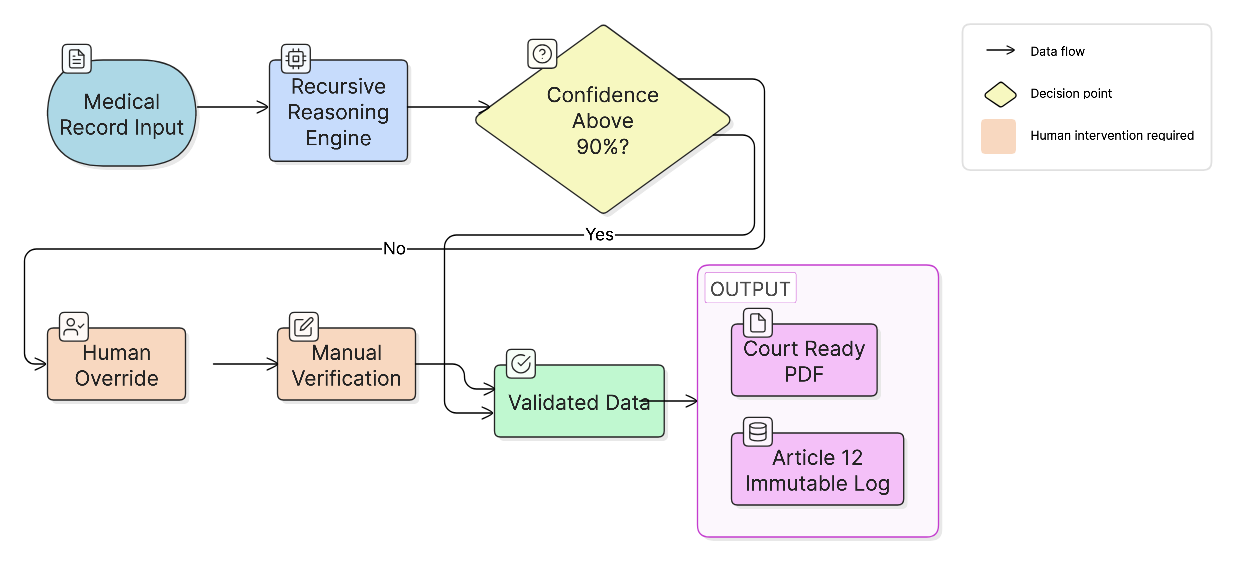

3. Article 14: Solving the "Doctor’s Handwriting" Paradox

Article 14 requires that High-Risk AI be designed for effective human oversight. Many interpret this as a requirement to have a human check every single output. In a high-volume legal practice, that is impossible.

A primary technical hurdle we solved was the interpretation of handwritten medical records. Doctors' notes are notoriously illegible, and traditional OCR often fails. An unmanaged LLM might try to "guess" a dosage or a date based on context, looking at a messy "10mg" and seeing "70mg." If that error reaches a Demand Letter, the lawyer is liable.

The DataMills Solution:

We built a distinct Intervention Layer. We don't just "use" an LLM; we wrap it in a Confidence Monitor.

- Contextual Reasoning: The system makes multiple passes over an uncertain snippet, attempting to decipher the handwriting from the surrounding text and other medical context such as ICD codes.

- The Human Override Node: If the ambiguity remains after those passes, the system neither guesses nor fails. As a last resort, to protect the lawyer’s professional standing, it routes the specific uncertain snippet to a human queue for manual clarification.

We do not treat Article 14 as a UI/UX ticket. It is a functional circuit breaker that ensures the AI remains an assistant, not an unmanaged decision maker.
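The routing logic of such an Intervention Layer can be sketched as follows. This is an illustration under stated assumptions, not the production design: `ConfidenceMonitor`, the `reader` callable (which stands in for whatever model reads the handwriting and reports a confidence score), the 0.9 threshold, and the three-pass limit are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

# A reader takes (snippet, context) and returns (text, confidence in [0, 1]).
Reader = Callable[[str, str], Tuple[str, float]]


@dataclass
class Resolution:
    text: Optional[str]  # None when the snippet was escalated to a human
    confidence: float
    needs_human: bool
    passes_used: int


@dataclass
class ConfidenceMonitor:
    reader: Reader
    threshold: float = 0.9  # hypothetical cut-off for accepting a reading
    max_passes: int = 3     # hypothetical limit on contextual retries
    human_queue: List[str] = field(default_factory=list)

    def resolve(self, snippet: str, contexts: List[str]) -> Resolution:
        """Try each context in turn; escalate if no pass is confident enough."""
        best_confidence = 0.0
        passes = 0
        for context in contexts[: self.max_passes]:
            passes += 1
            text, confidence = self.reader(snippet, context)
            best_confidence = max(best_confidence, confidence)
            if confidence >= self.threshold:
                return Resolution(text, confidence, False, passes)
        # Circuit breaker: never emit a low-confidence guess downstream.
        self.human_queue.append(snippet)
        return Resolution(None, best_confidence, True, passes)
```

The key design choice is that an escalated snippet returns no text at all, so a low-confidence "70mg" cannot leak into a generated document by accident.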

4. Article 13: The Transparency Paradox in Legal PDFs

Article 13 requires that High-Risk systems be transparent enough for users to interpret the output. However, in the legal world, a Demand Letter, like any legal document, must be "court-ready." It cannot be littered with AI watermarks or technical disclaimers that make it look unprofessional to a judge or opposing counsel.

The DataMills Engineering Solution:

We separate the Authoritative Output from the Interpretability Metadata.

- The Output: A professional, structured PDF that meets court standards, focusing on billings, expenses, and medical facts.

- The Explainability API: Alongside the PDF, our system generates an internal Technical Nutrition Label. This allows the lawyer to see the "why" behind the "what." Using techniques like SHAP values, we show the lawyer exactly which data points in the input files triggered a specific finding in the Demand Letter.

This provides the transparency required by law while maintaining the professional authorial integrity required by the court.
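One way to picture this separation is a single list of findings rendered twice: once as clean document text, and once as a machine-readable sidecar. The sketch below is illustrative only. `Finding`, `SourceAttribution`, and both render functions are hypothetical names, and the `weight` field is a placeholder for an attribution score computed elsewhere (for example by SHAP); no attribution is computed here.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class SourceAttribution:
    document_id: str
    page: int
    weight: float  # attribution score supplied by an upstream explainer


@dataclass
class Finding:
    statement: str
    sources: List[SourceAttribution]


def render_court_text(findings: List[Finding]) -> str:
    """Authoritative output: statements only, no model metadata."""
    return "\n".join(f"{i}. {f.statement}" for i, f in enumerate(findings, start=1))


def render_nutrition_label(findings: List[Finding]) -> str:
    """Interpretability metadata: each finding with its sources, strongest first."""
    label = [
        {
            "finding": i,
            "statement": f.statement,
            "sources": [
                asdict(s)
                for s in sorted(f.sources, key=lambda s: s.weight, reverse=True)
            ],
        }
        for i, f in enumerate(findings, start=1)
    ]
    return json.dumps(label, indent=2)
```

Because both views are derived from the same numbered findings, the lawyer can map item 3 in the PDF to item 3 in the label without any marker appearing in the court-facing document.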

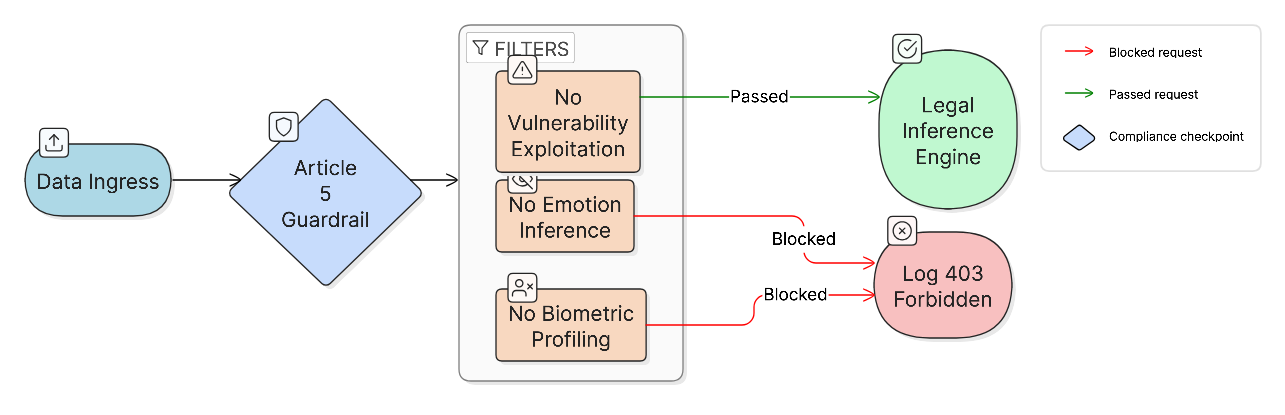

5. Article 5: Hard Guardrails at the API Gateway

Finally, Article 5 bans a set of "Prohibited Practices" outright, such as AI that exploits vulnerabilities or uses biometric categorization in discriminatory ways. In a system handling sensitive victim data and medical records, the risk of "feature creep" into prohibited territory is real, particularly if a model in a victim-facing information retrieval system begins to profile a victim based on sensitive medical data.

The DataMills Solution:

We implement Input Guardrails at the API gateway level. Compliance isn't a post-processing step; it's an ingress issue. Our infrastructure sanitizes payloads and blocks prohibited feature vectors before they ever reach the inference engine.
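As a rough illustration of an ingress check, the sketch below walks a JSON-like payload and rejects it if any deny-listed field appears at any depth. The field names in `PROHIBITED_FIELDS`, the `GuardrailViolation` exception, and the `admit` function are all hypothetical; what actually belongs on such a list is a legal determination, not an engineering one.

```python
from typing import Any, Iterator

# Hypothetical deny-list of feature names that must never reach inference.
PROHIBITED_FIELDS = frozenset(
    {"biometric_category", "inferred_ethnicity", "vulnerability_score"}
)


class GuardrailViolation(Exception):
    """Raised when a payload carries a prohibited field."""


def find_prohibited(payload: Any, path: str = "$") -> Iterator[str]:
    """Yield the path of every prohibited field found in a nested payload."""
    if isinstance(payload, dict):
        for key, value in payload.items():
            child = f"{path}.{key}"
            if key in PROHIBITED_FIELDS:
                yield child
            else:
                yield from find_prohibited(value, child)
    elif isinstance(payload, list):
        for index, item in enumerate(payload):
            yield from find_prohibited(item, f"{path}[{index}]")


def admit(payload: dict) -> dict:
    """Gateway check: return the payload unchanged or refuse it outright."""
    violations = list(find_prohibited(payload))
    if violations:
        raise GuardrailViolation(f"blocked fields: {violations}")
    return payload
```

This version rejects the whole request rather than silently stripping fields, so the violation is visible to the caller and can be logged. A sanitizing variant would drop the offending keys instead.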

Furthermore, we use Role-Based Access Control (RBAC) to ensure data siloing. In our victim retrieval pipelines, victims can only see documents explicitly released by their counsel. By baking these permissions into the infrastructure layer, we ensure that compliance with data sensitivity is a functional reality, not just a policy promise.
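The release rule described above can be expressed as a small access check. This is a sketch under assumptions: the `Role`, `User`, and `Document` types are hypothetical, cases are siloed by a `case_id`, and counsel is assumed to see every document in their own case.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Role(Enum):
    COUNSEL = "counsel"
    VICTIM = "victim"


@dataclass(frozen=True)
class User:
    user_id: str
    role: Role
    case_id: str


@dataclass(frozen=True)
class Document:
    doc_id: str
    case_id: str
    released_to_victim: bool  # set explicitly by counsel, False by default upstream


def can_view(user: User, doc: Document) -> bool:
    """Deny across cases; within a case, victims see only released documents."""
    if user.case_id != doc.case_id:
        return False  # data siloing between cases
    if user.role is Role.COUNSEL:
        return True   # assumption: counsel sees the full case file
    return doc.released_to_victim


def visible_documents(user: User, docs: List[Document]) -> List[Document]:
    """Filter applied before retrieval results are returned to the user."""
    return [doc for doc in docs if can_view(user, doc)]
```

Applying the filter before retrieval results are assembled, rather than in the UI, means an unreleased document never enters the context the model or the victim can see.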

Moving Toward Operational Resilience

As we approach August 2, 2026, the window for "Strategy" has closed. We are now in the Implementation Sprint.

The "Build vs. Buy" trap is the single biggest risk for engineering teams today. Do you want your Senior ML Engineers building "Compliance ETL Pipelines" and "Audit Logs," or do you want them optimizing your core models and reducing inference costs?

DataMills exists to offload this "Compliance Debt." We are the plumbers for high-stakes industries. We provide the verified infrastructure, the "Builder Bridge," that ensures your legal software isn't just fast, but legally resilient.

If your AI makes consequential decisions in the legal space, you are sitting on unmanaged risk. It’s time to move beyond the PDF and start building code that passes the test suite of the law.

At DataMills, we turn the liability of High-Risk AI into the asset of Operational Resilience.