Operationalizing High-Risk Healthcare AI: From Regulatory Burden to Competitive Advantage

TL;DR

DataMills enables healthcare organizations to deploy AI-assisted diagnostic and clinical decision support systems in full compliance with the AI Act and without operational friction. By embedding regulatory infrastructure directly into production systems, we transform compliance from a documentation exercise into a runtime capability. Organizations reduce audit preparation from weeks to days while maintaining continuous inspection readiness.

The Challenge: When Clinical Innovation Meets Regulatory Reality

Healthcare organizations scaling AI across radiology, emergency triage, and clinical decision support face a critical inflection point. Model performance is no longer the primary bottleneck; regulatory resilience is.

The AI Act's enforcement deadline for High-Risk AI Systems (Annex III) creates existential pressure for healthcare deployers. Organizations must demonstrate:

- Continuous risk monitoring (Article 9) with real-time drift detection

- Immutable data lineage (Article 10) proving training set representativeness

- Automated technical documentation (Article 11/Annex IV) generated from system state, not manual assembly

- Forensic-grade operational logs (Article 12) capturing every inference, override, and logic path

- Structured human oversight workflows (Article 14) that prevent automation bias without crushing clinical velocity

The DataMills Approach: Hard-Coded Compliance

DataMills bridges the gap between legal text and functional implementation. We don't generate compliance documentation; we instrument systems so that evidence is produced automatically at runtime.

Our Sovereign Hybrid Stack deploys via Virtual Private Cloud (VPC) instances, siloing each client on the DataMills Private Cloud with zero data pooling for training. The architecture centers on three technical pillars:

1. The Immutable Audit Stream (Article 12 Compliance)

Standard application logs are typically rotated and deleted on a short schedule, often every 30 days. In a courtroom or regulatory inquiry, showing a "grep" of deleted logs is not a defense.

DataMills implements WORM (Write-Once, Read-Many) Storage to create Forensic Snapshots of every inference:

- Model Version Hash: SHA-256 hash of specific model weights used at execution

- Input Snapshot: Raw tensor data or JSON payload before normalization

- Logic Path: Map of vector clusters, prompts, and reasoning steps activated to reach conclusions

- Confidence Scores & Override Logs: Complete trace of clinician interventions

For diagnostic AI, this means every prediction, from radiology segmentation to triage prioritization, is fully reconstructible years later. When a medical fact is challenged, the system provides exact provenance: what the model saw, how it reasoned, and who validated it.
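
The snapshot fields listed above can be sketched as an append-only record. This is a minimal illustration rather than the DataMills implementation: the class names, field names, and the in-memory list standing in for WORM storage are all hypothetical, and the hash chain linking each record to its predecessor is an added assumption used here to make tampering detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ForensicSnapshot:
    """One inference record; field names are illustrative, not a real schema."""
    model_version_hash: str            # SHA-256 of the model weights used at execution
    input_snapshot: str                # raw payload, captured before normalization
    logic_path: tuple                  # ordered reasoning steps that were activated
    confidence: float
    clinician_override: Optional[str]  # who intervened, if anyone
    recorded_at: str
    prev_record_hash: str              # assumption: chain records to expose tampering


def record_hash(snapshot: ForensicSnapshot) -> str:
    """Hash a snapshot deterministically (sorted keys, compact separators)."""
    canonical = json.dumps(asdict(snapshot), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class AppendOnlyLog:
    """In-memory stand-in for WORM storage: records are appended, never edited."""

    GENESIS = "0" * 64

    def __init__(self):
        self._records = []

    def append(self, *, weights: bytes, payload: dict, logic_path, confidence, override=None):
        prev = record_hash(self._records[-1]) if self._records else self.GENESIS
        snapshot = ForensicSnapshot(
            model_version_hash=hashlib.sha256(weights).hexdigest(),
            input_snapshot=json.dumps(payload, sort_keys=True),
            logic_path=tuple(logic_path),
            confidence=confidence,
            clinician_override=override,
            recorded_at=datetime.now(timezone.utc).isoformat(),
            prev_record_hash=prev,
        )
        self._records.append(snapshot)
        return snapshot

    def verify_chain(self) -> bool:
        """Recompute every link; any edited record breaks the chain after it."""
        prev = self.GENESIS
        for snapshot in self._records:
            if snapshot.prev_record_hash != prev:
                return False
            prev = record_hash(snapshot)
        return True
```

In a real deployment the list would be replaced by storage that enforces write-once semantics at the platform level; the chain only adds a way to check integrity when records are read back.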

2. The Intervention Layer (Article 14 Human Oversight)

Manual review of every AI output is operationally impossible at scale. Unmanaged automation risks "automation bias," where clinicians defer to algorithmic recommendations without critical evaluation.

DataMills treats human oversight as a UI/UX and circuit-breaker problem, not a policy mandate. Our Confidence Monitor analyzes prediction uncertainty in real time:

- High-confidence outputs: Proceed to EHR integration with automated logging

- Low-confidence or high-risk predictions: Trigger Human Override Nodes, routing specific decisions to clinical review queues with contextual explanation

- Recursive Context Checking: For ambiguous inputs (e.g., illegible handwritten notes), the system attempts resolution using surrounding medical context (ICD codes, previous records) before escalating to human verification

This ensures AI remains an assistant, not an unmanaged decision-maker, while maintaining clinical workflow velocity.
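
The three routing outcomes described above can be sketched as a single decision function. The threshold value, the `Prediction` fields, and the `Route` names are assumptions chosen for illustration; the source does not specify how the Confidence Monitor is parameterized.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_LOG_TO_EHR = "auto_log_to_ehr"   # high confidence: proceed with logging
    CONTEXT_CHECK = "context_check"       # try surrounding context before escalating
    HUMAN_REVIEW = "human_review"         # Human Override Node / clinical review queue


@dataclass
class Prediction:
    label: str
    confidence: float                # model-reported probability in [0, 1]
    high_risk: bool = False          # flagged by clinical policy, regardless of confidence
    input_ambiguous: bool = False    # e.g. an illegible handwritten note
    context_attempted: bool = False  # True once context resolution has been tried


def route(pred: Prediction, threshold: float = 0.90) -> Route:
    """Pick a route; the 0.90 threshold is a placeholder, not a recommended value."""
    # Ambiguous inputs get one attempt at resolution from surrounding context.
    if pred.input_ambiguous and not pred.context_attempted:
        return Route.CONTEXT_CHECK
    # Anything still ambiguous, high-risk, or below threshold goes to a clinician.
    if pred.high_risk or pred.input_ambiguous or pred.confidence < threshold:
        return Route.HUMAN_REVIEW
    return Route.AUTO_LOG_TO_EHR
```

Checking the high-risk flag independently of the confidence score reflects the text's "low-confidence or high-risk" condition: a confident prediction in a high-risk category is still routed to review.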

3. The Explainability API (Article 13 Transparency)

Regulatory transparency and professional presentation often conflict. A court-ready diagnostic report cannot be cluttered with AI watermarks or technical disclaimers, yet lawyers and clinicians need to verify the "why" behind the "what."

DataMills separates Authoritative Output from Interpretability Metadata:

- The Output: Professional, structured clinical documentation meeting court and regulatory standards

- The Technical Nutrition Label: Internal SHAP-value analysis showing exactly which input features triggered specific findings, enabling clinicians to validate AI logic without compromising document integrity

This dual-layer approach satisfies both legal transparency requirements and professional presentation standards.
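
As a rough sketch of the separation described above, the example below keeps the clinical text and the attribution metadata in different fields of one record. It assumes a plain linear scoring model, for which SHAP values have a closed form under feature independence (weight times the feature's deviation from its background mean); a production system would more likely use a SHAP library against the actual model. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DualLayerReport:
    clinical_output: str    # rendered into the professional document
    interpretability: dict  # stored alongside it, never rendered into the document


def linear_shap(weights: dict, x: dict, background_mean: dict) -> dict:
    """SHAP values for a linear model with independent features:
    phi_i = w_i * (x_i - E[x_i])."""
    return {name: weights[name] * (x[name] - background_mean[name]) for name in weights}


def build_report(finding: str, weights: dict, bias: float, x: dict, background_mean: dict):
    phi = linear_shap(weights, x, background_mean)
    # Expected model output over the background data.
    base_value = bias + sum(weights[n] * background_mean[n] for n in weights)
    score = bias + sum(weights[n] * x[n] for n in weights)
    # Largest absolute contribution first, so reviewers see the main drivers.
    ranked = sorted(phi.items(), key=lambda item: abs(item[1]), reverse=True)
    return DualLayerReport(
        clinical_output=finding,
        interpretability={"base_value": base_value, "score": score, "attributions": ranked},
    )
```

The attributions sum to the difference between the score and the base value, which gives a reviewer a simple consistency check on the label.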

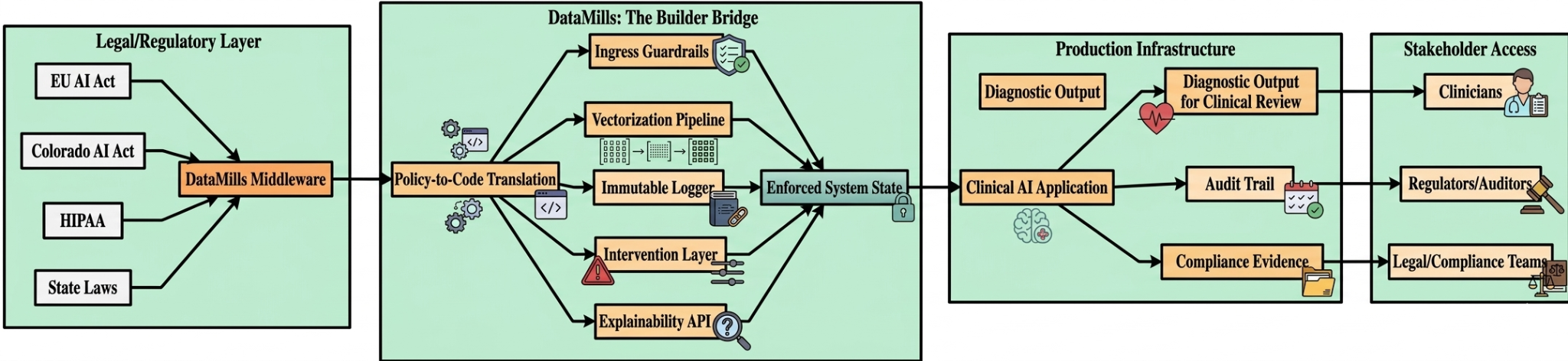

Technical Architecture: The Builder Bridge

DataMills operates as robust middleware positioned between foundational models and clinical deployment, specifically designed to address stringent regulatory requirements.

The core components of the DataMills architecture and their mapping to regulatory requirements are summarized below:

| Component | Function | Regulatory Mapping |
|---|---|---|
| Ingress Guardrails | API-gateway-level input sanitization blocking prohibited feature vectors (biometric categorization, emotion inference) | Article 5 (Prohibited Practices) |
| Vectorization Pipeline | Query decomposition for high-precision information retrieval; bi-temporal dataset tracking for training state reconstruction | Article 10 (Data Governance) |
| Immutable Logger | WORM storage capturing forensic snapshots with model hashes, input states, and logic paths | Article 12 (Record-Keeping) |
| Explainability Engine | SHAP-based interpretability generating technical nutrition labels alongside clinical outputs | Article 13 (Transparency) |
| Intervention Layer | Confidence monitoring with automated escalation to Human Override Nodes | Article 14 (Human Oversight) |
| Zero-Retention LLM Interface | GPT-4o mini via Enterprise API with contractual zero-training/zero-retention guarantees | GDPR/HIPAA Data Processing |
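
To make the Ingress Guardrails row more concrete, here is a minimal sketch of a gateway-side check that rejects payloads carrying prohibited feature fields. The field names in `PROHIBITED_FIELDS` are invented for illustration; a real deny-list would come from legal review, and matching on key names alone is a simplification.

```python
# Hypothetical deny-list; real entries would be defined by legal and clinical review.
PROHIBITED_FIELDS = frozenset({"emotion_score", "biometric_category"})


class ProhibitedInputError(ValueError):
    """Raised when a request carries a field on the deny-list."""


def find_prohibited(payload, path=""):
    """Walk nested dicts and lists, returning the path of every denied key."""
    hits = []
    if isinstance(payload, dict):
        for key, value in payload.items():
            child = f"{path}.{key}" if path else str(key)
            if str(key).lower() in PROHIBITED_FIELDS:
                hits.append(child)
            hits.extend(find_prohibited(value, child))
    elif isinstance(payload, list):
        for index, item in enumerate(payload):
            hits.extend(find_prohibited(item, f"{path}[{index}]"))
    return hits


def sanitize_or_reject(payload):
    """Return the payload unchanged, or raise before it reaches any model."""
    hits = find_prohibited(payload)
    if hits:
        raise ProhibitedInputError(f"prohibited fields present: {hits}")
    return payload
```

Rejecting the whole request, rather than silently dropping the offending fields, keeps the refusal visible in the audit trail.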

Deployment Model:

Sovereign VPC isolation ensures no cross-client data leakage. Each instance maintains private vector databases (Pinecone/Weaviate) with Role-Based Access Control (RBAC)-enforced siloing. This ensures that victims see only documents explicitly released by counsel and clinicians access only assigned case files.

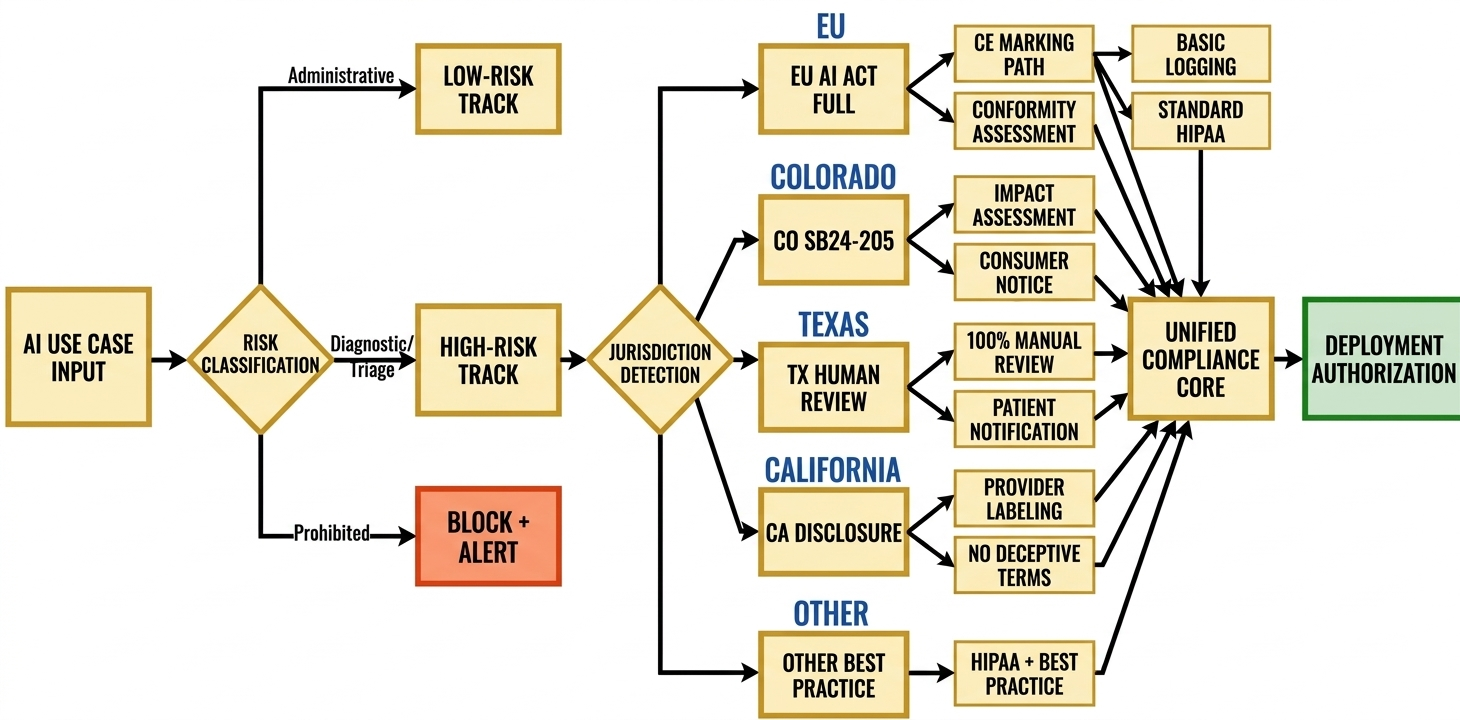

Global Compliance Integration

While the EU AI Act provides the structural backbone, DataMills architectures are designed to adapt to various jurisdictional overlays:

- Colorado AI Act (SB24-205): Includes automated impact assessment generation, demographic bias monitoring, and consumer notification workflows for "consequential decisions" in healthcare access.

- US State Patchwork (TX, CA, IL): Offers configurable human-in-the-loop requirements, patient disclosure modules, and algorithmic denial-review workflows.

- HIPAA: Provides Business Associate Agreement (BAA) templates, minimum-necessary enforcement at the vector storage layer, and breach-notification automation.

- GDPR: Facilitates Data Subject Access Request (DSAR) fulfillment via immutable audit trails, and the right-to-explanation through Technical Nutrition Labels.

The infrastructure utilizes geofencing for compliance logic by jurisdiction, enabling multi-national deployment without architectural fragmentation.
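
One way to picture jurisdiction-based compliance logic is a lookup that layers regional modules on top of a common baseline. The sketch below is an assumption about how such a configuration might look: the region codes, module names, and the choice to treat the AI Act core as a universal baseline are illustrative, with module names loosely taken from the overlays listed above.

```python
# Baseline applied everywhere, following the text's "structural backbone" framing.
BASELINE = ("eu_ai_act_core",)

# Hypothetical overlay table keyed by region code.
OVERLAYS = {
    "EU": ("dsar_fulfillment", "technical_nutrition_label"),
    "US": ("hipaa_minimum_necessary", "breach_notification"),
    "US-CO": ("impact_assessment", "demographic_bias_monitoring", "consumer_notification"),
}


def active_modules(region: str) -> tuple:
    """Resolve modules for a code such as 'US-CO', from country level down to sub-region."""
    modules = list(BASELINE)
    parts = region.split("-")
    for depth in range(1, len(parts) + 1):
        prefix = "-".join(parts[:depth])  # 'US', then 'US-CO'
        for module in OVERLAYS.get(prefix, ()):
            if module not in modules:
                modules.append(module)
    return tuple(modules)
```

Because every region resolves against the same table, adding a jurisdiction is a configuration change rather than a new deployment topology.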

Outcomes: From Liability to Operational Resilience

Organizations deploying DataMills infrastructure achieve significant operational and compliance advantages:

- Audit Preparation: Preparation time is reduced from 4–6 weeks to under 72 hours through continuous, automated documentation generation.

- Drift Detection: Subpopulation performance monitoring identifies model degradation in under 24 hours, with automated safety interlocks pausing deployment when disparity thresholds are exceeded.

- Inspection Readiness: Systems remain audit-ready at all times, with forensic traceability established for every clinical decision.

- Malpractice Risk Reduction: Complete decision provenance protects against liability claims.

- Deployment Velocity: CI/CD compliance gating enables rapid model updates without regulatory re-certification delays.
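
The drift-detection outcome in the list above relies on comparing performance across subpopulations and pausing deployment when the gap grows too large. The following is a minimal sketch of that check, assuming accuracy as the metric and a best-versus-worst gap as the disparity measure; the function names and the 0.10 threshold are placeholders, not values from DataMills.

```python
from collections import defaultdict


def subgroup_accuracy(records):
    """records: iterable of (subgroup, prediction, ground_truth) tuples."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, prediction, truth in records:
        total[group] += 1
        correct[group] += int(prediction == truth)
    return {group: correct[group] / total[group] for group in total}


def disparity_gap(accuracies):
    """Difference between the best- and worst-performing subgroup."""
    values = list(accuracies.values())
    return max(values) - min(values) if values else 0.0


def interlock_should_pause(records, max_gap=0.10):
    """True when the subgroup accuracy gap exceeds the (placeholder) threshold."""
    return disparity_gap(subgroup_accuracy(records)) > max_gap
```

A production check would also need minimum sample sizes per subgroup and a defined evaluation window, since small groups can produce large gaps by chance.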

About DataMills

DataMills bridges the gap between legal text and functional implementation. We serve as the Builder Bridge for high-stakes industries (Healthcare, Legal Tech, and Fintech) where AI failure is existential.

Our philosophy is simple: Regulators don't audit policies; they audit system states. We provide the middleware infrastructure that hard-codes compliance into production architecture, transforming regulatory burden into competitive advantage.

The window for strategy has closed. We are in the implementation sprint.

Ready to operationalize your High-Risk AI compliance?

Contact DataMills to assess your current architecture against EU AI Act, Colorado AI Act, and global healthcare AI requirements.